Introduction¶

In this chapter we introduce techniques to estimate the fair value of a financial instrument exploiting the pricing information available in financial markets.

Fair value is the price at which a financial instrument is economically equivalent, at a given time and under a given information set, to its future cash flows or payoffs, once transaction costs, opportunity costs and risk are appropriately accounted for.

In this chapter we will focus on two conceptually different ways to estimate fair value. The first one assumes markets are efficient enough so that prices observed in the market are our best estimation of fair value, bar some idiosyncratic components coming from trading frictions like liquidity premiums, dealer spreads, and transaction costs. In this case, fair value estimation can be seen as a filtering problem, and we will study a simple albeit powerful model to carry out this task: the Kalman filter.

When financial instruments are highly illiquid or do not trade directly in organized markets, fair value estimation must rely on economic—often referred to as fundamental—valuation models. These models infer the value of a financial instrument from the future cash flows specified by its contractual structure. Central to this approach is the concept of the time value of money, which states that a payment received in the future is not economically equivalent to the same payment received today, due to opportunity costs. As a result, future cash flows must be converted into present values through the application of a discount factor, which renders cash flows occurring at different points in time comparable.

Time discounting, however, is not the only challenge faced by fundamental valuation models. Future cash flows are frequently contingent on information that is not known at the valuation date, such as future prices of financial assets or macroeconomic variables. Examples include dividends paid by a company or the value of the underlying asset referenced by a derivative contract. A simple, albeit theoretically naive, approach consists in valuing the instrument as the expected value of its future cash flows, treated as random variables. This approach, however, fails to account for heterogeneity in investors’ risk preferences. Once cash flows are stochastic, the realized return on the investment becomes uncertain, and uncertainty is not valued equally by all investors. To address this limitation, one can adopt a utility indifference pricing framework, in which risk preferences explicitly enter the valuation.

In many situations, particularly for illiquid flow instruments, this is the most refined valuation approach available. However, for the specific case of derivative instruments, stronger theoretical results can be obtained under additional assumptions. As shown by Fischer Black, Myron Scholes, and Robert C. Merton in the 1970s, it is possible to construct dynamic replication portfolios that reproduce the payoffs of a derivative using traded instruments. In such settings, the fair value of the derivative becomes independent of investors’ risk aversion, since any deviation from this price would give rise to risk-free arbitrage opportunities. This insight leads to the arbitrage-free pricing framework, which will be introduced in the final section of this chapter.

Finally, a unifying pricing framework that can accommodate both the risk-aversion profile of the investor and the arbitrage-free constraints is the stochastic discount factor pricing framework (Cochrane, 2005), which we will briefly describe at the end of the chapter.

Filtering models for fair value estimation¶

As mentioned in the introduction, for financial instruments that are relatively liquid, we can aim at extracting all the pricing information from price indications and trades in the market, without having to resort to economic theories of fair value. In this setup, we take as fair value the price that market participants are willing to pay.

The issue, though, is that price indications and trades cannot themselves be considered pure observations of fair value, since they might be affected by market frictions: bid-ask spreads, particularities of the negotiation mechanism, liquidity fluctuations, specific needs of market participants at a given time, etc. When instruments trade in limit order books, a popular estimate of the fair value is the mid-price, the arithmetic average of the best bid and ask. However, if bid-ask spreads are wide or liquidity is thin in the first levels, such an estimate is not necessarily very precise. Trades provide a lot of information, since they are real transactions and not indications of interest, and in principle the larger they are, the more information they carry. Still, they are subject to the aforementioned market frictions, which reduce their reliability.

These frictions make all price observations noisy estimates of the fair value, so if we want to estimate a fair value out of them we need to be able to separate the signal from the noise or, in other words, filter those observations. This is precisely what, under certain model assumptions, a Kalman filter does.

The Kalman filter was introduced in the chapter on Bayesian Theory. It is a Bayesian filtering algorithm that allows us to perform exact inference, i.e. to compute in closed form the distribution of the latent state vector in a Linear Gaussian State Space Model (LG-SSM).

Recall that a State Space Model (SSM) describes a dynamic system with an unobservable or only partially observable state, a vector $\mathbf{x}_t$, whose dynamics in time are described by a so-called transition equation:

$$\mathbf{x}_{t+\Delta t} = f(\mathbf{x}_t, \mathbf{u}_t, \Delta t, \boldsymbol{\epsilon}_t)$$

where $f$ is a general function, $\mathbf{u}_t$ are inputs (or controls) that affect the dynamics, $\Delta t$ is the time-step between observations, and $\boldsymbol{\epsilon}_t$ is a transition noise with a given distribution. The state is observed indirectly via a proxy vector $\mathbf{y}_t$ through the observation equation:

$$\mathbf{y}_t = h(\mathbf{x}_t, \boldsymbol{\eta}_t)$$

where $h$ is another general function and $\boldsymbol{\eta}_t$ is the observation noise, meaning that observations carry a degree of uncertainty with respect to the latent state.

A Linear Gaussian Model (LGM) is a specific case of the SSM where both the transition and observation functions are linear and the noise terms are Gaussian. In this case, we can use the Kalman filter algorithm to compute the distribution of the state vector at any time, given the observations and the transition and observation models. If some or all of the parameters of these models are not known, they can be estimated using standard techniques like Maximum Likelihood Estimation (MLE), or Expectation Maximization (EM) when the former becomes computationally intractable due to the latent state vector.

For non-Linear Gaussian Models, there are extensions of the Kalman filter that can be used:

Extended Kalman Filter (EKF): Extends the Kalman Filter to non-linear state space models by linearizing the dynamics and observation models around the current estimate using Taylor expansions.

Unscented Kalman Filter (UKF): Avoids linearization by using deterministic sampling to approximate the state distribution.

Particle filtering / sequential Monte Carlo: Uses Monte Carlo methods directly to approximate the posterior distribution of the fair value. See Guéant & Pu, 2018 for more details of this approach applied to the fair-price estimation problem in corporate bonds.

A simple pricing model¶

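Let us consider a simple setup where we aim to infer the distribution of the fair value $m_t$ of a financial instrument that follows a random walk with volatility $\sigma$:

$$m_{t+\Delta t} = m_t + \sigma \sqrt{\Delta t}\, \epsilon_t, \qquad \epsilon_t \sim N(0, 1)$$

We don’t observe this fair value, only trades at prices $p_t$ and sizes $v_t$, which we consider noisy observations of the mid since they include transaction costs and potentially other external factors like dealer inventory positions:

$$p_t = m_t + \sigma_o(v_t)\, \eta_t, \qquad \eta_t \sim N(0, 1)$$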

Readers will recognize that this is the local level model discussed extensively in the chapter on Bayesian Modelling. For the observation noise we can introduce prior business knowledge about the confidence we have in trade observations as a source of pricing information. In his book Option Trading (Sinclair, 2010), Euan Sinclair describes a simple model that quantifies the information provided by a trade based on its size, $v_t$:

where is a baseline observation noise and is an input to the model, the trade size we believe saturates the information provided in the sense that our mid estimation will essentially move to the price of the trade. In contrast, trades of small size, , will have and will provide negligible pricing information. An alternative simple model is:

where in this case is a size scale that separates the regimes where the information provided by the trade is negligible, , or relevant , but it does not saturate for a specific trading size, as in Sinclair’s model. Of course, nothing prevents us from using more prior business knowledge to enrich the observation model with other observable characteristics of the trade or the wider market context.
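As a rough illustration of this setup, the sketch below simulates a drift-less random-walk fair value and noisy trade prices around it. The function name, the parameter names and the choice of a constant (volume-independent) observation noise are assumptions made here for illustration only.

```python
import numpy as np

def simulate_local_level(n_steps, sigma, sigma_obs, dt=1.0, m0=100.0, seed=0):
    """Simulate a latent random-walk fair value and noisy trade prices."""
    rng = np.random.default_rng(seed)
    # Fair value: cumulative sum of Gaussian increments with variance sigma^2 * dt.
    increments = sigma * np.sqrt(dt) * rng.standard_normal(n_steps)
    fair_value = m0 + np.cumsum(increments)
    # Trades: fair value plus independent Gaussian observation noise.
    trades = fair_value + sigma_obs * rng.standard_normal(n_steps)
    return fair_value, trades

fair_value, trades = simulate_local_level(n_steps=1000, sigma=0.05, sigma_obs=0.10)
```

The gap between `trades` and `fair_value` plays the role of the frictions discussed above; a volume-dependent noise could be plugged in by replacing the constant `sigma_obs` with an array of per-trade values.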

Estimation of the simple pricing model¶

As discussed in Bayesian Modelling, the standard way to estimate the parameters of a Kalman Filter is using the Expectation Maximization (EM) algorithm, suitable for probabilistic models with latent variables. However, the properties of the simple pricing model can be exploited to obtain closed-form estimators for its parameters using moment matching.

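Let us start by working with a model where observation errors have no dependency on the volume: $\sigma_o(v_t) = \sigma_o$. Here $\sigma$ is the volatility of the fair value random walk, $\sigma_o$ the standard deviation of the observation noise, and $\epsilon_t$, $\eta_t$ the standard Gaussian transition and observation noises. The key is to compute statistics of the first differences of the trade prices $p_t$:

$$\Delta p_t \equiv p_{t+\Delta t} - p_t = \sigma \sqrt{\Delta t}\, \epsilon_t + \sigma_o (\eta_{t+\Delta t} - \eta_t)$$

which depend only on observed trades. First we compute the variance:

$$\mathrm{Var}[\Delta p_t] = \sigma^2 \Delta t + 2 \sigma_o^2$$

where we have used that $\epsilon_t$, $\eta_t$ and $\eta_{t+\Delta t}$ are independent random variables. This expression links the variance of the first differences in trade prices with the parameters to estimate. We need, though, a second expression to solve for each parameter separately. For that we compute the lag-1 auto-covariance of $\Delta p_t$:

$$\mathrm{Cov}[\Delta p_t, \Delta p_{t-\Delta t}] = -\sigma_o^2$$

where again we have used the independence between the noise terms: the only shared term is $\eta_t$, which enters the two differences with opposite signs. Our estimators then read:

$$\hat{\sigma}^2 \Delta t = \widehat{\mathrm{Var}}[\Delta p_t] + 2\, \widehat{\mathrm{Cov}}[\Delta p_t, \Delta p_{t-\Delta t}]$$

$$\hat{\sigma}_o^2 = -\widehat{\mathrm{Cov}}[\Delta p_t, \Delta p_{t-\Delta t}]$$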

The results have an interesting interpretation:

Starting from the first equation: the variance of the fair value must be lower than the variance of the observed trades, given the additional noise in the observation equation. Or, in other words, since the fair value is filtered from the trades, which means that we estimate it by removing noise from the trades, it has to have a lower variance.

The second equation can be recognized as a form of the Roll estimator for effective bid-ask spreads, a typical measure of liquidity. Of course, by construction of the model, the noise that is filtered is essentially the bid-ask spread that liquidity providers request as a compensation for the liquidity provision.
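The moment-matching idea can be sketched in a few lines: the observation noise variance is estimated as minus the lag-1 auto-covariance of the trade price differences, and the fair value variance as the variance of the differences plus twice that auto-covariance. The function name and the synthetic data below are assumptions for illustration, and the sketch assumes equally spaced trades with volume-independent noise.

```python
import numpy as np

def moment_estimators(trades, dt=1.0):
    """Closed-form estimates of (sigma^2, sigma_obs^2) from trade price differences."""
    d = np.diff(trades)
    d = d - d.mean()
    var = np.mean(d * d)                  # sample variance of the differences
    autocov = np.mean(d[1:] * d[:-1])     # sample lag-1 auto-covariance
    sigma_obs2 = -autocov                 # Roll-type estimator of the noise variance
    sigma2 = (var + 2.0 * autocov) / dt   # variance rate of the fair value
    return sigma2, sigma_obs2

# Synthetic check: random-walk fair value plus i.i.d. Gaussian trade noise.
rng = np.random.default_rng(42)
n, sigma, sigma_obs = 200_000, 0.05, 0.10
fair_value = 100.0 + np.cumsum(sigma * rng.standard_normal(n))
trades = fair_value + sigma_obs * rng.standard_normal(n)
sigma2_hat, sigma_obs2_hat = moment_estimators(trades)
```

In small samples the sample auto-covariance can turn out positive, in which case the noise variance estimate is negative and should be treated as unreliable.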

In the case of volume dependent observation errors, we can still compute these statistics, which now read:

The statistical estimator of the variance of the first differences can still be used, by accounting for the variability of the error with volume (heteroskedasticity):

Moving into the 1-lag covariance, we have:

As long as the volume dependency has a single parameter to fit, we can still use these two equations to solve for the parameters. If we use, for instance, the simple model , where is given and is to be estimated from data (notice that we could simply estimate , the factorization is useful for business interpretation):

In this simple case, the estimation of the parameters and is straightforward. More complex functions like the one from Sinclair cannot be estimated with only two moments. Further moments can be computed to provide extra equations, although at this point it might be worth resorting to standard estimation techniques like EM if available.

Inference on the simple pricing model¶

We can use the general Kalman filter equations described in Bayesian Modelling to derive the distribution of our mid-price at the next time when a trade happens.

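The Kalman filter algorithm operates sequentially over observations, applying two steps at each one: the predict step, where we compute the distribution of the fair value based purely on the random walk model, and the update step, in which we incorporate the information provided by the observation of a new trade. Let $\mu_t$ and $\sigma_t^2$ be the mean and variance of the fair value after the last update at time $t$. We denote by $N(\mu_{t+\Delta t|t}, \sigma^2_{t+\Delta t|t})$ the distribution of the fair value at $t+\Delta t$ before observing the trade, and by $N(\mu_{t+\Delta t}, \sigma^2_{t+\Delta t})$ the distribution afterwards.

Let us apply first the predict step. The distribution of the fair value at $t+\Delta t$ is Gaussian with mean and variance given by:

$$\mu_{t+\Delta t|t} = \mu_t, \qquad \sigma^2_{t+\Delta t|t} = \sigma_t^2 + \sigma^2 \Delta t$$

Since our model uses drift-less random walk dynamics for the evolution of the fair value, the predicted mean does not change and the variance increases proportionally to the time step $\Delta t$.

Now we use the update step to incorporate the information from a trade at price $p_{t+\Delta t}$ happening at $t+\Delta t$, with observation noise $\sigma_o$:

$$\mu_{t+\Delta t} = \mu_{t+\Delta t|t} + K_{t+\Delta t} \left(p_{t+\Delta t} - \mu_{t+\Delta t|t}\right), \qquad \sigma^2_{t+\Delta t} = \left(1 - K_{t+\Delta t}\right) \sigma^2_{t+\Delta t|t}$$

where $K_{t+\Delta t}$ is the Kalman gain, given by:

$$K_{t+\Delta t} = \frac{\sigma^2_{t+\Delta t|t}}{\sigma^2_{t+\Delta t|t} + \sigma_o^2}$$

The updated mean is an interpolation between the predicted mean and the trade observation, weighted by the Kalman gain:

If the observation noise is much smaller than the uncertainty in the mean in the prediction step, namely $\sigma_o \ll \sigma_{t+\Delta t|t}$, the Kalman gain tends to $1$ and $\mu_{t+\Delta t} \to p_{t+\Delta t}$, i.e. since our confidence in the information from the trade is much higher than in our best estimation of the mean, we essentially update the mean with the trade price.

On the contrary, if $\sigma_o \gg \sigma_{t+\Delta t|t}$, the Kalman gain tends to $0$ and $\mu_{t+\Delta t} \to \mu_{t+\Delta t|t}$. In this case, our confidence in the information provided by the trade is very low, so essentially we ignore the trade information and use the predicted mean.

In between those two limiting cases, the updated mean combines the information from the prediction, which uses the internal dynamics and our last update, with the trade information.

If we look at the new standard deviation, we also find similar limiting behaviors:

If $\sigma_o \ll \sigma_{t+\Delta t|t}$, then $\sigma_{t+\Delta t} \to \sigma_o$, since as we discussed above, we essentially use the trade information to inform our estimation of the mid.

If $\sigma_o \gg \sigma_{t+\Delta t|t}$, then $\sigma_{t+\Delta t} \to \sigma_{t+\Delta t|t}$, i.e. we stick with the estimation from the predict step.

One interesting consequence of the optimality of the Kalman filter is that the updated standard deviation cannot be larger than the predicted one, and for any finite $\sigma_o$ it is always smaller: the information from the trade always contributes to improving our estimation of the fair value. This is easily seen by writing:

$$\sigma^2_{t+\Delta t} = \frac{\sigma^2_{t+\Delta t|t}}{1 + \sigma^2_{t+\Delta t|t} / \sigma_o^2}$$

Since $\sigma^2_{t+\Delta t|t} / \sigma_o^2$ is non-negative, the denominator is never lower than 1.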
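The predict and update steps for this one-dimensional model fit in a few lines of code. The following is a minimal sketch, assuming a constant observation noise and hypothetical function and argument names; it is meant to make the two steps concrete rather than to serve as a production filter.

```python
import numpy as np

def kalman_step(mean, var, trade, sigma, sigma_obs, dt=1.0):
    """One predict + update step for the random-walk fair value model."""
    # Predict: the mean is unchanged, the variance grows with the time step.
    mean_pred = mean
    var_pred = var + sigma**2 * dt
    # Update: blend prediction and trade price using the Kalman gain.
    gain = var_pred / (var_pred + sigma_obs**2)
    mean_upd = mean_pred + gain * (trade - mean_pred)
    var_upd = (1.0 - gain) * var_pred
    return mean_upd, var_upd, gain

def kalman_filter(trades, sigma, sigma_obs, mean0, var0, dt=1.0):
    """Run the filter over a sequence of equally spaced trades."""
    means, variances = [], []
    mean, var = mean0, var0
    for p in trades:
        mean, var, _ = kalman_step(mean, var, p, sigma, sigma_obs, dt)
        means.append(mean)
        variances.append(var)
    return np.array(means), np.array(variances)

# Single step: predicted variance 0.05, observation variance 0.04.
m, v, k = kalman_step(mean=100.0, var=0.04, trade=100.5, sigma=0.1, sigma_obs=0.2)
```

With these inputs the gain is 5/9, so the updated mean moves a bit more than half of the way from 100.0 towards the trade price, and the updated variance is smaller than the predicted one.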

In some applications of the local level model to pricing we might also be interested in the Kalman smoothing algorithm. Recall that the difference with the Kalman filtering we have just seen is that in smoothing we estimate the latent variable using all the available data, including the future. Of course this means Kalman smoothing does not make sense for online price inference, but there are other applications of this pricing model where using the best estimation of the latent fair value is relevant:

Estimation of parameters when using Expectation Maximization, as discussed in Bayesian Modelling.

Calibration of pricing models, for instance, as we will discuss in the chapter on RfQ Modelling, when we seek to estimate the hit rate probability, i.e. the probability that a client trades an RfQ with a dealer given the quoted price. In this case, we are interested in having the best possible estimation of the fair value to isolate the effect of the spread, which is what puts dealers in competition. In the same chapter we will also discuss models to evaluate the toxicity of clients’ flows, i.e. when clients seem to have more information than the dealer when trading, so the dealer later ends up on the wrong side of the market. Estimating such models typically relies on analyzing how the fair value of the instrument moves before and after a client intends to trade.
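For the random-walk model, smoothing can be sketched as a forward filtering pass followed by a backward pass (the Rauch-Tung-Striebel recursion). The code below is an illustrative sketch with assumed names, a constant observation noise and synthetic data; the prior mean and variance are arbitrary choices.

```python
import numpy as np

def kalman_filter(trades, sigma, sigma_obs, mean0, var0, dt=1.0):
    """Forward pass: filtered means and variances of the fair value."""
    means, variances = [], []
    mean, var = mean0, var0
    for p in trades:
        var_pred = var + sigma**2 * dt
        gain = var_pred / (var_pred + sigma_obs**2)
        mean = mean + gain * (p - mean)
        var = (1.0 - gain) * var_pred
        means.append(mean)
        variances.append(var)
    return np.array(means), np.array(variances)

def rts_smoother(f_means, f_vars, sigma, dt=1.0):
    """Backward pass: refine each filtered estimate using later observations."""
    s_means, s_vars = f_means.copy(), f_vars.copy()
    for t in range(len(f_means) - 2, -1, -1):
        var_pred = f_vars[t] + sigma**2 * dt   # predicted variance at t+1
        j = f_vars[t] / var_pred               # smoother gain
        # With no drift, the predicted mean at t+1 is the filtered mean at t.
        s_means[t] = f_means[t] + j * (s_means[t + 1] - f_means[t])
        s_vars[t] = f_vars[t] + j**2 * (s_vars[t + 1] - var_pred)
    return s_means, s_vars

rng = np.random.default_rng(1)
sigma, sigma_obs = 0.05, 0.10
fair_value = 100.0 + np.cumsum(sigma * rng.standard_normal(200))
trades = fair_value + sigma_obs * rng.standard_normal(200)
f_means, f_vars = kalman_filter(trades, sigma, sigma_obs, mean0=100.0, var0=1.0)
s_means, s_vars = rts_smoother(f_means, f_vars, sigma)
```

The smoothed variances are never larger than the filtered ones, and both coincide at the last observation, where no future data is available.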

Multiple observations of the same instrument¶

In many real pricing situations, we might have different sources that reveal information about the fair value of an instrument, for example trades in different platforms, information from composites, or pricing information derived indirectly from trading indicators like the hit&miss. We will explore later those sources in detail. If they happen asynchronously, we can just use the simple pricing model introduced in the previous section, adjusting the observation error depending on the pricing source.

If they happen synchronously, though, we need to expand the Kalman filter to cope with simultaneous observations. This requires changing the observation model to a system of equations:

We can compute the Kalman gain in this case, which is a matrix:

where:

is the total observation precision. Notice that, as a sanity check, in the case of a single observation we recover the Kalman gain derived in the previous section. For multiple observations, the update equation then reads:

The relative weight of influence of each observation depends on the fraction of the total variance that the observation variance represents, with more noisy observations having a smaller effect in the update.
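A small sketch can make the precision weighting concrete. It assumes that the simultaneous observations have independent Gaussian errors, so that each observation's gain is its precision divided by the sum of the predicted precision and the total observation precision; the function and argument names are hypothetical.

```python
import numpy as np

def update_multi(mean_pred, var_pred, prices, obs_vars):
    """Update a scalar fair value with several simultaneous, independent observations."""
    prices = np.asarray(prices, dtype=float)
    precisions = 1.0 / np.asarray(obs_vars, dtype=float)
    total_precision = precisions.sum()
    # One gain per observation: its precision over the posterior precision.
    gains = precisions / (1.0 / var_pred + total_precision)
    mean_upd = mean_pred + gains @ (prices - mean_pred)
    var_upd = 1.0 / (1.0 / var_pred + total_precision)
    return mean_upd, var_upd, gains

# Two simultaneous observations, the second four times noisier (in variance).
mean_upd, var_upd, gains = update_multi(100.0, 0.05, [100.4, 99.9], [0.04, 0.16])
```

Under the independence assumption, the result coincides with applying two single-observation updates one after the other with no predict step in between, which is a convenient sanity check.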

Multiple correlated instruments¶

The Kalman filter model for pricing becomes even more relevant when we include information from other financial instruments that are historically correlated with the one whose fair value we are estimating. Typical situations are:

Instruments that are more liquid, i.e. they trade more often and with smaller bid and ask spreads. This allows us to improve the estimation of the fair value until we observe a new trade from the instrument, anticipating potential relevant movements derived from common market factors.

Instruments that trade in markets that are open when markets where our instrument of interest is traded are closed. This allows us to reduce the uncertainty from the overnight gap in trading data.

The simple pricing model we have analyzed so far can be easily extended to include information from a set of N instruments. Notice that in this case what we are actually doing is estimating the fair values of all the instruments in the set, not necessarily only the one of interest. The evolution of the fair values is now modelled using:

where is now a covariance matrix that takes into account the effect of correlations in the pricing movements. Price observations follow the model:

In this case, since we are already modelling correlations at fair value level, a typical choice is to take diagonal, i.e. , although in certain setups one might want to include some form of bid-ask spread correlation between instruments.

With this model specification, we can directly use the filtering, smoothing and EM equations discussed in the Bayesian Modelling chapter. Let us, though, focus specifically on the case of $N = 2$ instruments, where we can work out the Kalman filter equations in detail to get further insights into the model’s inner workings.

The predict step in the Kalman filter is given by the following equations:

which are relatively simple, as expected. The update step equations are more interesting, since they include the effect of observations and, critically, the impact of one instrument’s trades on the fair value of the other:

with the Kalman gain being:

For the two instrument case, the Kalman gain can be expanded into:

Let us analyze some particular cases:

If we set the correlation to zero, , the Kalman gain becomes:

i.e. we recover the equations of the Kalman gain for a single instrument for each diagonal, and the update equation factors in two independent updates.

If we set , which simulates the case in which we don’t have an observation of a trade in the first financial instrument (by considering its observation error so large that its effect is negligible), the Kalman gain becomes:

The update equations for each instrument’s fair value read:

Notice that these equations are equivalent to the ones that we would derive if we use an observation matrix and compute the update step for arbitrary values of the parameters. Going into the results, the second equation is the same update equation we had for a single instrument for which we have observed a trade. The first equation is more interesting, since it isolates the effect that an observation of a trade in an instrument has in our fair value estimation of a correlated instrument. As expected, the influence is proportional to the estimated correlation between the instruments, . The effect of the influence depends on the relative sizes of the variances in play. It helps to rewrite the equation as:

where is the linear regression coefficient between and . It provides an upper bound on the Kalman gain between the two instruments, which happens when the observation in the second instrument has no error . This makes sense, since in the absence of noise in the observation of the second instrument, the update equation becomes the best linear prediction of using .
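The cross-instrument effect can be checked numerically. The sketch below implements a generic matrix update in which an observation matrix selects the instruments that actually traded; the function name, the numerical values and the use of `np.nan` as a placeholder for the missing trade are assumptions for illustration.

```python
import numpy as np

def kalman_update(mean_pred, cov_pred, prices, obs_cov, observed):
    """Kalman update for correlated fair values; `observed` flags which instruments traded."""
    observed = np.asarray(observed, dtype=bool)
    n = len(mean_pred)
    H = np.eye(n)[observed]                  # observation matrix: rows of traded instruments
    R = H @ obs_cov @ H.T                    # observation noise of the traded instruments
    S = H @ cov_pred @ H.T + R               # innovation covariance
    K = cov_pred @ H.T @ np.linalg.inv(S)    # Kalman gain
    innovation = np.asarray(prices, dtype=float)[observed] - H @ mean_pred
    mean_upd = mean_pred + K @ innovation
    cov_upd = (np.eye(n) - K @ H) @ cov_pred
    return mean_upd, cov_upd, K

s1, s2, rho = 0.2, 0.3, 0.8                  # predicted std devs and correlation
mean_pred = np.array([100.0, 50.0])
cov_pred = np.array([[s1**2, rho * s1 * s2], [rho * s1 * s2, s2**2]])
obs_cov = np.diag([0.1**2, 0.1**2])
# Only the second instrument trades; the first price is a placeholder.
mean_upd, cov_upd, K = kalman_update(mean_pred, cov_pred, [np.nan, 50.6],
                                     obs_cov, [False, True])
beta = rho * s1 / s2                         # regression coefficient of instrument 1 on 2
```

In this example the gain applied to the first instrument equals `beta` times the gain of the second one, so the fair value of the instrument that did not trade still moves in response to the trade in the correlated instrument.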

Let us evaluate this model in one of the typical scenarios for fair value discovery discussed above: two correlated instruments traded in markets with some periods of non-overlapping trading. The objective is to leverage their correlation to estimate fair values for the instrument whose market is closed. The underlying principle is that new information affecting the price of the actively traded instrument during its market hours would similarly impact the closed-market instrument, if it were tradable.

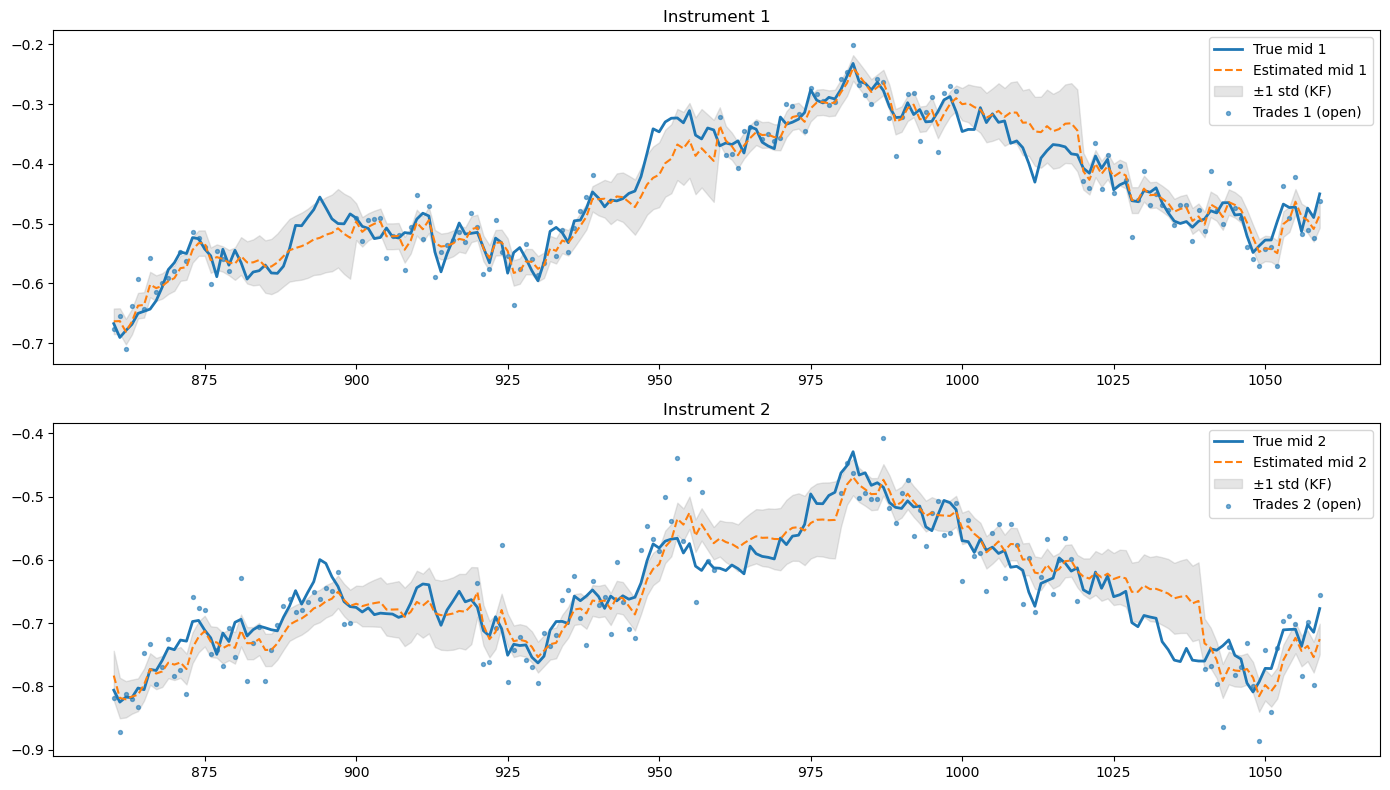

For that, we first generate synthetic fair values using a correlated Brownian motion with , and . Then we generate trades over 22 days, each day consisting of 60 time-steps to keep the simulation efficient. We consider three situations: one in which only the first instrument is traded, one in which only the second instrument is traded, and a third one in which both are simultaneously traded. We use a diagonal observation covariance to generate the trades, i.e. we assume that there is no correlation between the spreads with respect to the fair value, so correlation is driven exclusively by fair value correlations. To generate the trades, we use standard deviations in the observation covariance of 0.032 and 0.045, respectively. Then we use Expectation Maximization (EM) over the first half of the synthetic trade data to estimate the parameters of the model, and run the Kalman filter over the second half of the data to compare the estimations of the fair value to the real simulated values. The results can be seen in the following figure:

Figure 1: Estimation of the fair value of instruments when their market is closed, using information from correlated instruments that trade at those times. The results are based on a simulation in which first the fair values are generated (blue lines) and trades (blue dots) are simulated when the market is open, which happens half of the day. Notice that a third of the day both instruments trade simultaneously. The orange lines are the fair values estimated using the Kalman filter, which is trained with half of the data using EM and then run over the second half of the data. The figure focuses on four days of test data.

As we see, the Kalman filter successfully exploits the correlation between instruments to update the fair value of the instruments when the market is closed. The updates are not perfect but they capture the overnight trends, improving over typical baselines like the closing price of the instrument. In general, the estimated fair values include the true fair values within one standard deviation, depicted as the shaded grey area in the figure.

Pricing sources and models¶

When it comes to feeding the Kalman filter with information to improve the estimations of the fair value, a variety of sources is typically used. Let us discuss the most typical ones: those based on Limit Order Book (LOB) data and those based on Request for Quote (RfQ) data. As we have seen, these sources can be used whether they belong to the instrument of interest or to correlated ones. However, there are some considerations to take into account when using correlated instruments, which we discuss at the end.

One point that will become a common theme in this section is the information content of the observations. Intuitively, not every source of pricing is equally informative. For example, as we discussed above, we expect that trades with larger volumes are more informative than small trades. In the same way, a cancellation of a limit order deep in a Limit Order Book might not carry meaningful new pricing information. These effects need to be incorporated case by case into the pricing model.

LOB traded¶

Limit Order Books (LOBs) are complex structures that contain extensive pricing information. Nevertheless, as discussed in Chapter Market microstructure, the primary references for price discovery are the best bid and ask quotes, the mid-price (defined as the average of these two) and the prices of executed trades. To improve robustness and reduce the impact of potential market manipulation, it is standard practice to compute volume-weighted averages of the first K levels on both the bid and ask sides, and to define a robust mid-price based on these averages.

Mid-price information¶

As mentioned above, a robust mid-price indicator in a LOB is:

where and are volumes and prices of bid and ask, respectively, and is the number of levels that are taken into account, for instance . We have omitted the time subscript in volumes and prices but they are also time dependent. Similarly, we can compute a robust bid-ask spread in the form:

which has to be positive since any bid and ask limit orders with the same price are automatically matched in the LOB. With this information, we can compute a simple model of fair value based on LOB information:

The choice of a Gaussian distribution is for simplicity, since it captures well our subjective view of the pricing information that the LOB contains: as we saw in chapter Bayesian Modelling, since we only identify the mean and variance as the constraints, the maximum entropy distribution associated with these constraints is the Gaussian distribution. Admittedly, there is an extra constraint in the form of positivity of the fair value, but for prices far from the zero boundary we can safely omit it. A more grounded criticism of this model could be that the Gaussian distribution allows the fair value to lie beyond the best bid and ask prices, and those prices are tradeable: if the market consensus of fair value were beyond those prices, market participants would be willing to trade at them until the fair value lay again between the best bid and ask. However, since liquidity might be thin at the first levels, and therefore those prices might not be available for bulk transactions, we consider a soft constraint more appropriate, even when we use the robust bid-ask spread instead of the best bid-ask spread.
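As an illustration of the robust indicators, the sketch below computes volume-weighted average prices over the first K levels of each side. Taking the robust mid as the simple average of the two volume-weighted prices, and the robust spread as their difference, is an assumption made here for illustration; other weightings are possible. The function name and the sample book are hypothetical.

```python
import numpy as np

def robust_mid_and_spread(bid_prices, bid_sizes, ask_prices, ask_sizes, k=3):
    """Volume-weighted mid and spread over the first k levels of each side."""
    bid_vwap = np.average(bid_prices[:k], weights=bid_sizes[:k])
    ask_vwap = np.average(ask_prices[:k], weights=ask_sizes[:k])
    mid = 0.5 * (bid_vwap + ask_vwap)   # assumed: simple average of both sides
    spread = ask_vwap - bid_vwap        # assumed: difference of both sides
    return mid, spread

# Levels are ordered from the best price outwards.
mid, spread = robust_mid_and_spread(
    bid_prices=[99.9, 99.8, 99.7], bid_sizes=[10, 20, 30],
    ask_prices=[100.1, 100.2, 100.3], ask_sizes=[10, 20, 30],
)
```

In this symmetric book the robust mid is 100.0, the same as the plain mid-price, while the robust spread is wider than the best bid-ask spread of 0.2 because deeper levels carry more volume.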

Of course, in many situations this pricing source might not be sufficient for actual applications, since in illiquid markets bid-ask spreads are large and therefore the pricing source has a large uncertainty. It is precisely in those situations that the Kalman filter model plays a part.

Using the robust LOB mid-price directly as an observation in the Kalman filter is problematic, since the feed is available in streaming and therefore, even if we limit the updates to the Kalman filter to the moments in which the LOB gets updated (i.e. arrival of new orders, cancellations, modifications), the pricing observations might jam the Kalman filter estimation, making the model consider that the LOB mid-price is a perfect source of pricing information. To see this, let us look at the effect of a second observation following an initial one. Recall that the first predict plus update gave:

with:

If we approach the limit this simplifies to:

Let us apply a second predict plus update step on top of this, keeping the limit:

Since:

then:

If we continue applying predict plus update steps in the limit, by using the induction principle we arrive at the following result:

If we now take the limit we converge to:

Therefore, in the case of LOB updates that don’t provide significant new pricing information but happen with a high frequency, as is the case in LOBs for liquid instruments, the Kalman filter will converge to the mid-price with zero uncertainty.
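The collapse of the uncertainty is easy to reproduce numerically. In the sketch below (hypothetical function name, arbitrary parameter values), the same observation noise is applied repeatedly; with a vanishing time step the precisions simply add up, so after n updates the variance is 1 / (1 / var0 + n / sigma_obs^2), which tends to zero as n grows.

```python
def variance_after_updates(var0, sigma, sigma_obs, dt, n_updates):
    """Posterior variance after n predict + update steps with constant observation noise."""
    var = var0
    for _ in range(n_updates):
        var_pred = var + sigma**2 * dt
        gain = var_pred / (var_pred + sigma_obs**2)
        var = (1.0 - gain) * var_pred
    return var

# Same number of observations, arriving slowly (dt = 1) or in a burst (dt = 0).
slow = variance_after_updates(0.04, 0.1, 0.2, dt=1.0, n_updates=1000)
fast = variance_after_updates(0.04, 0.1, 0.2, dt=0.0, n_updates=1000)
```

With a finite time step the variance settles at a positive steady state, whereas in the burst case it keeps shrinking with every update even though the observations add little new information.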

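A simple way to fix this issue is to run the Kalman filter with other pricing information (trades, RfQs, see next section) and to combine its output with the LOB-based estimate, treating them as two separate fair value estimators at any time. Denoting the two estimators by $\hat{m}_{KF}$ and $\hat{m}_{LOB}$, with variances $\sigma^2_{KF}$ and $\sigma^2_{LOB}$, the best linear combination $\hat{m} = w\, \hat{m}_{KF} + (1 - w)\, \hat{m}_{LOB}$ is the one that minimizes the variance:

$$\sigma^2_{\hat{m}} = w^2 \sigma^2_{KF} + (1 - w)^2 \sigma^2_{LOB}$$

which is achieved for:

$$w = \frac{\sigma^2_{LOB}}{\sigma^2_{KF} + \sigma^2_{LOB}}$$

Notice that we have considered them independent estimators, otherwise there would be an extra term accounting for the correlation. Therefore, the best linear estimator is:

$$\hat{m} = \frac{\sigma^2_{LOB}\, \hat{m}_{KF} + \sigma^2_{KF}\, \hat{m}_{LOB}}{\sigma^2_{KF} + \sigma^2_{LOB}}, \qquad \sigma^2_{\hat{m}} = \frac{\sigma^2_{KF}\, \sigma^2_{LOB}}{\sigma^2_{KF} + \sigma^2_{LOB}}$$

This makes intuitive sense: the smaller the error of one estimator compared to the other, the more weight the combined estimate assigns to it. Importantly, the resulting variance is never higher than that of either individual estimator.

To check it, simply take the ratio of the final variance with any of the variances of the independent predictors, for example:

$$\frac{\sigma^2_{\hat{m}}}{\sigma^2_{KF}} = \frac{\sigma^2_{LOB}}{\sigma^2_{KF} + \sigma^2_{LOB}} \le 1$$

the equality happening when $\sigma^2_{KF} = 0$, i.e. it was already a perfect predictor and, therefore, there is no way to improve the prediction.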

Notice that, since the combined fair value estimator is reconstructed independently at each point in time, without relying on past estimates, it is not affected by the high-frequency sampling issue observed when using the LOB mid-price as an observation.
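The combination of two independent estimators amounts to inverse-variance weighting, which can be sketched as follows. The function name and the numbers are illustrative assumptions, and the sketch ignores any correlation between the two estimators.

```python
def combine_estimators(mean_a, var_a, mean_b, var_b):
    """Minimum-variance linear combination of two independent, unbiased estimators."""
    w = var_b / (var_a + var_b)               # weight on estimator a
    mean = w * mean_a + (1.0 - w) * mean_b
    var = var_a * var_b / (var_a + var_b)
    return mean, var, w

# Estimator a (e.g. Kalman filter output) is four times more precise than b (e.g. LOB mid).
mean, var, w = combine_estimators(100.2, 0.01, 100.0, 0.04)
```

Here the more precise estimator receives a weight of 0.8, and the combined variance (0.008) is below both input variances.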

Trades¶

Trades happening in the LOB are a valuable source of pricing information, since they correspond to real transaction prices and not only to interest in trading expressed as limit orders. When a trade happens, the exchange publicly reports the time, the size and the price, but not the parties or the orders involved. The latter is particularly relevant, since an important piece of pricing information is the side (buy or sell) of the order that was aggressive, meaning the one that consumed liquidity in the order book. As we discussed in Market microstructure, this can typically be a market order or a limit order at a price that is equal to or better than the prices available on the opposite side. Reverse engineering the side from a trade is an inference problem, and requires a model. A simple and widely used one is the so-called tick-rule model, which consists of comparing the price of the trade with the mid-price available in the order book just before the trade:

If the price of the trade is below the mid-price, then we assume it was an aggressive sell order, since the trade price has to be a size-weighted average of the prices of the limit orders available. Therefore, it makes sense to assume the trade consumed liquidity on the side closer to the trade price, using the mid-price as a reference.

If the price of the trade is above the mid-price, we assume it was an aggressive buy order.

This model is not perfect, however. It does not account for hidden liquidity that might exist at more favorable prices than those displayed, which would alter the reference mid-price and, consequently, the trade classification logic. Moreover, it assumes a sequential processing of orders, whereas in practice, multiple orders may arrive simultaneously. In such cases, the exchange’s internal matching engine determines execution priority and order pairing through mechanisms that are not observable externally, meaning the apparent sequence of trades and quote updates in public data may not reflect the true matching process. This lack of transparency can lead to misclassification when applying the tick rule or similar models.
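The classification logic described above reduces to a comparison with the prevailing mid-price. A minimal sketch follows, with a hypothetical function name; returning "unknown" for trades exactly at the mid is a choice made here, since the text does not cover that case.

```python
def classify_trade_side(trade_price, best_bid, best_ask):
    """Infer the aggressor side by comparing the trade price with the prevailing mid."""
    mid = 0.5 * (best_bid + best_ask)
    if trade_price > mid:
        return "buy"      # liquidity consumed on the ask side
    if trade_price < mid:
        return "sell"     # liquidity consumed on the bid side
    return "unknown"      # trade exactly at the mid: not classified here

side = classify_trade_side(trade_price=100.1, best_bid=99.9, best_ask=100.1)
```

The quotes passed to the function should be those observed just before the trade, which in practice requires careful alignment of trade and quote timestamps.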

Once we have the relevant pricing information for the trade, namely time, side, size and of course price, it can be used to update the current fair value estimation of the financial instrument. The size information is useful to include some measure of information content in the order, as discussed in the simple pricing model section when introducing the Sinclair model: intuitively, a very small size trade should not be as relevant as a large size trade when updating our fair value estimation. Sinclair proposes modelling a trade observation as a Gaussian random variable , where is the observed trade price at time , and is given by:

where is a constant to be estimated, and is an exogenous parameter that provides a typical scale for which trades are considered informative. It can be given by prior business knowledge or estimated from the statistical distribution of trade sizes. Notice that this error has the desirable property of becoming zero at the scale and above, i.e. trades above this scale are considered maximally informative and the fair value is instantaneously updated to the trade price. It also becomes infinitely large as the volume tends to zero, which makes the Kalman filter essentially ignore those observations.
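To see how such a size-dependent error acts on the filter, the sketch below uses one functional form that has the two limiting properties just described: it is zero at and above a saturation size, and it diverges as the size tends to zero. This form is an assumption chosen for illustration and is not necessarily the exact expression in Sinclair's book; the names `sigma0` and `v_max` are also hypothetical.

```python
import math

def saturating_obs_noise(size, sigma0, v_max):
    """Illustrative size-dependent observation noise (assumed functional form)."""
    if size <= 0:
        return math.inf                       # no size: observation carries no information
    return sigma0 * max(v_max / size - 1.0, 0.0)

def kalman_gain(var_pred, obs_noise):
    """Scalar Kalman gain for a given predicted variance and observation noise."""
    return var_pred / (var_pred + obs_noise**2)

gain_large = kalman_gain(0.05, saturating_obs_noise(250, 0.1, 100))  # above saturation
gain_mid = kalman_gain(0.05, saturating_obs_noise(50, 0.1, 100))     # half the saturation size
gain_zero = kalman_gain(0.05, saturating_obs_noise(0, 0.1, 100))     # vanishing size
```

With this form the gain is 1 for trades at or above the saturation size, so the estimate jumps to the trade price, and 0 for vanishing sizes, so the trade is ignored.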

Sinclair’s model is not the only way to introduce this behavior into the model. Other choices of are also valid. For example, the model:

might be more realistic in the sense that volume adjusts the degree of information but it never completely ignores small trades, for which the error tends to . Another alternative that does not completely saturate for trades of large size is the following:

which tends to as .
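To make the role of the size-dependent observation error concrete, the following sketch implements three error functions and a scalar Kalman update. The functional forms are assumptions chosen only to reproduce the limiting behaviors described above (zero error at and above the size scale with infinite error at zero size; a finite error for very small trades; an error that vanishes only for infinitely large trades). They are not necessarily the exact expressions used in the text, and all names (`sinclair_like_error`, `bounded_error`, `non_saturating_error`, `kalman_update`) are hypothetical.

```python
import math


def sinclair_like_error(size, size_scale, sigma0):
    """Zero at and above size_scale, infinite as size -> 0 (assumed form)."""
    if size <= 0:
        return math.inf
    return sigma0 * max(size_scale / size - 1.0, 0.0)


def bounded_error(size, size_scale, sigma0):
    """Tends to sigma0 as size -> 0, zero at and above size_scale (assumed form)."""
    return sigma0 * max(1.0 - size / size_scale, 0.0)


def non_saturating_error(size, size_scale, sigma0):
    """Tends to sigma0 as size -> 0 and to zero only as size -> infinity (assumed form)."""
    return sigma0 / (1.0 + size / size_scale)


def kalman_update(mean, var, obs, obs_std):
    """Scalar Kalman update of a fair value estimate (assumes var > 0)."""
    obs_var = obs_std ** 2
    if math.isinf(obs_var):
        return mean, var                 # uninformative observation: no update
    gain = var / (var + obs_var)         # Kalman gain
    return mean + gain * (obs - mean), (1.0 - gain) * var


if __name__ == "__main__":
    prior_mean, prior_var = 100.0, 1.0
    for size in (1, 50, 200):
        std = sinclair_like_error(size, size_scale=100, sigma0=1.0)
        print(size, kalman_update(prior_mean, prior_var, 101.0, std))
```

With a zero observation error the gain equals one and the estimate jumps to the trade price. With an infinite error the gain is zero and the trade is ignored.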

So far we have not used the side information inferred from the tick-rule model. There are different ways this information can be factored into the pricing. In markets that are quite unbalanced, a trade on the side opposite to the one where the market is predominantly trading might be considered more informative. This means potentially adjusting the error function with the side information. Another alternative is to build separate Kalman filters for buy and sell information, which are then combined into a final fair value estimation using the model discussed in the previous section for optimally combining two predictors, although in this case neglecting the correlation between bid and ask estimations might not be a solid modelling choice. We leave it as an exercise for the reader to derive the optimal linear predictor with correlation.

RfQ traded¶

We have discussed the Request for Quote (RfQ) protocol in the chapter on Market Microstructure. In terms of pricing information, the main difference with LOBs is the asymmetry of information between the different participants in the process: dealers and clients. Let us focus on the case of negotiation via Multi-Dealer-to-Client platforms, which offers the richest variety of cases, from the point of view of the dealer, who is typically the one actively trying to calculate the fair value of the instruments. The pricing information that the dealer receives is the following:

Platform composites: the platform does not provide individual streaming bid and ask prices from other dealers, since this information is only available to clients. However, the platform typically offers a composite price, an index calculated by aggregating the individual feeds from the dealers and applying proprietary rules. A composite typically consists of a stream of bid and ask prices that roughly represent average indicative, or sometimes executable, streamed prices from the dealers active in the platform. For example:

Bloomberg’s CBBT for bonds (Composite Bloomberg Bond Trader), using executable bid and ask streams from dealers

Tradeweb’s TW Composite, using both indicative and executable prices

In some cases, the platforms offer pricing feeds that already incorporate multiple pricing sources. For instance, Bloomberg offers BGN (Bloomberg Generic Price) for multiple instruments, which incorporates several pricing sources in the calculation: dealers’ indicative prices, interdealer brokers, traded data from exchanges (if available), etc. MarketAxess offers CP+ (Composite plus) for bonds, using indicative and executable streams from dealers but also adding information from trades within its platform as well as reported data from trade repositories like TRAX and TRACE (see below).

Traded price: when the dealer trades the RfQ (or ends tied), of course she has the information from the traded price.

Cover price: when a dealer wins the RfQ and executes the trade, the platform discloses the second-best price quoted by a competitor, called the cover price.

Non pricing information: if the dealer quoted the second-best price, the platform informs them of this outcome, although it does not disclose the actual traded price. The dealer can, however, infer that the executed price must have been better than their own quote. Each participating dealer is also notified whether the client executed with another dealer or decided not to trade at all.

Apart from the pricing information gained via the trading platform, in order to improve transparency and price formation, there are private and public initiatives to share pricing information post-trade, particularly in the bond markets:

TRACE (United States): Operated by FINRA since 2002, the Trade Reporting and Compliance Engine (TRACE) requires broker-dealers to report transactions in corporate, agency, and certain securitized and Treasury securities. Reports must be submitted within minutes of execution, and a portion of the data is made public, providing prices, volumes, and timestamps. TRACE has become the main benchmark for post-trade transparency in U.S. fixed income and is widely used for valuation, best-execution monitoring, and market analysis.

MiFID II / APAs (European Union): Under the MiFID II and MiFIR framework, investment firms must publish details of OTC bond and derivative transactions through Approved Publication Arrangements (APAs). These entities disseminate standardized post-trade data on price, size, and time of execution, subject to deferrals for large or illiquid trades. Although the system has enhanced transparency across European markets, the coexistence of multiple APAs has led to fragmentation and the absence of a unified consolidated tape.

TRAX and Axess All (Europe): TRAX, originally created by ICMA and now operated by MarketAxess, serves as a trade matching and regulatory reporting system under MiFID II, EMIR, and SFTR. Building on this infrastructure, MarketAxess launched Axess All, a private transparency service that aggregates and anonymizes post-trade bond data to produce daily composite levels for European government and corporate bonds. While not an official regulatory tape, it provides a valuable commercial reference for post-trade pricing and market trends.

Other Regional Systems: Comparable frameworks exist in other jurisdictions. In the United Kingdom, the FCA maintains the MiFID-style APA regime and is developing a consolidated tape for fixed income. In Canada, the IIROC operates a corporate bond transparency service with public post-trade data similar to TRACE. In Asia, initiatives remain limited, though regulators in Japan and Singapore are exploring TRACE-like models to improve fixed-income transparency.

Composites¶

Modelling composites is similar to modelling mid-prices from order books, since they share the same information structure, a continuous feed of bid and ask prices, except that composite prices are typically indicative. Again, given that the frequent updates in the feed might not carry new pricing information, it makes sense to model the composite as an independent fair value source that can then be aggregated with other estimations, like those using Kalman filters on trades and other information.

The model is therefore:

with:

where , are, respectively, the bid and ask composite prices published by the platform.

RfQs¶

The information from RfQs depends, as discussed above, on the final status of the dealer in the process. If the dealer wins the RfQ, the observation corresponds to a trade, and the modelling is broadly similar to the one discussed in the context of trades in the LOB. There is, though, one key difference with the case of LOBs: the cover price is also disclosed to the dealer.

Intuitively, the closer the cover and the trade price, the more confidence we might place in the trade price as a pricing source, since a second dealer has quoted a similar level. Or, put differently, if the dealer wins the trade at a price far from the cover price, this might indicate a clear mispricing that needs to be adjusted, rather than a reliable pricing source. One way to incorporate this into the model is to make the observation error a function of the distance to the cover:

where is the distance to the cover, and a modulating function with a minimum at zero.
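As an illustration of a modulating function with a minimum at zero, the sketch below inflates a base observation error quadratically with the distance to the cover. The quadratic form, the parameter `distance_scale` and the function name are assumptions made for this example. Any non-negative function that is smallest at zero distance would serve the same purpose.

```python
def cover_adjusted_error(base_std, trade_price, cover_price, distance_scale):
    """Observation error of a won RfQ, inflated by the distance to the cover.

    Assumed modulating function: g(d) = 1 + (d / distance_scale)**2,
    which has its minimum, g(0) = 1, at zero distance.
    """
    distance = abs(trade_price - cover_price)
    return base_std * (1.0 + (distance / distance_scale) ** 2)


if __name__ == "__main__":
    # A trade won right at the cover keeps the base error; one won far
    # from the cover is treated as a much noisier observation.
    print(cover_adjusted_error(0.05, 100.00, 100.00, distance_scale=0.10))
    print(cover_adjusted_error(0.05, 100.40, 100.00, distance_scale=0.10))
```

The resulting error can be fed as the observation standard deviation into the same Kalman update used for LOB trades.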

A second case is the one in which the dealer misses the RfQ, but the price was the second best quoted. The fact that among the number of dealers quoting competitively the price quoted was the second best carries some significant information that can be used to adjust the fair value. One way to do this is to fit a probabilistic model that predicts the distance to cover based on features available in the negotiation, for example, a simple linear regression model on :

where are the regression weights and . Alternatively, if we want to explicitly model that the distance to cover cannot be negative, we can use a log transformation, although then we will have to use a non-linear Kalman filter to account for this observation. Assuming the simple linear regression model, we can add the observation to the Kalman filter:

where depending on the side of the RfQ (buy or sell).

A third case happens when the client trades, the dealer misses the RfQ but her quote was not even the second best (cover). The information in this setup is much weaker, in the form of a bound to the traded price (the quoted price). Although, in principle, a probabilistic inference on the traded price could be performed using this information, the potential model risk coming from the plethora of model assumptions typically outweighs the information gains, so we will not dive deeper here.

Hit & Miss¶

Another source of pricing information comes from analyzing patterns in the dealer's own trading information. In particular, it is useful to analyze the hit & miss, i.e. the ratio of won RfQs to the total traded by the client with any dealer (i.e. excluding those where the client did not trade at all), over a certain window of time. Intuitively, a dealer that has an abnormally high or low hit & miss might be using an incorrect fair value estimation when quoting. Of course, the tricky question here is to assess what the normal levels of hit & miss are, which has to take into account the influence of factors like, for example, inventory levels on the spreads quoted: if a dealer has relatively high inventory holdings, it is likely that she will skew quotes to reduce inventory risk, hence the hit & miss will be higher if there are more quotes overall in the direction of risk reduction.

If the dealer has estimated a model that estimates the probability of winning the RfQs, and the model is well calibrated, it can be evaluated for the same windows of RfQs as the empirical hit & miss (H&M). If we compute the latter at time using the last RfQs, it is given by:

where is an abbreviation of the condition . For a well calibrated model, such empirical hit & miss should be close to the expected by the model:

Here, are the filtrations at the time of request of the i-th RfQ, which include the spreads quoted by the dealer:

with the price quoted for the i-th RfQ and the estimation of the fair value at time .

If we consider each RfQ independent of each other, the variance of the hit & miss provides us with a scale of natural variability of our estimation versus the empirical hit & miss:

Intuitively, then, if we get a persistent deviation between and that cannot be explained by the expected variability from the randomness of the RfQ process, this could be attributed to a potential bias in the estimation of , which becomes a further pricing source for our fair value estimation model. Further modelling is required, though, to inject this information into the Kalman filter, which goes beyond the scope of this book. For us, it suffices to point out how we can potentially convert hit & miss deviations from the target into pricing information.
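The comparison between empirical and model-implied hit & miss can be sketched as follows. The example assumes each traded RfQ is an independent Bernoulli trial with the win probability given by the dealer's model, so that the variance of the hit & miss over a window is the sum of the individual Bernoulli variances divided by the squared window size. The function name and the use of a z-score as deviation measure are assumptions for illustration.

```python
import math


def hit_and_miss_check(won, win_probs):
    """Compare empirical hit & miss with the one expected by a win-probability model.

    won:       list of 0/1 flags, one per RfQ traded by the client (1 = we won)
    win_probs: model probabilities of winning each of those RfQs
    """
    n = len(won)
    empirical = sum(won) / n
    expected = sum(win_probs) / n
    # Independent Bernoulli trials: Var = (1/n^2) * sum p_i (1 - p_i)
    std = math.sqrt(sum(p * (1.0 - p) for p in win_probs)) / n
    z_score = (empirical - expected) / std if std > 0 else float("nan")
    return empirical, expected, std, z_score


if __name__ == "__main__":
    won = [1, 1, 0, 1]
    probs = [0.5, 0.5, 0.5, 0.5]
    print(hit_and_miss_check(won, probs))
```

A z-score that stays large in absolute value over successive windows is the kind of persistent deviation that may point to a biased fair value estimation, once other drivers such as inventory skew have been accounted for.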

Correlated instruments¶

As we discussed above, if a set of instruments exhibit historical price correlations and we have reasons to believe those are structural correlations, i.e. they will continue existing in the present, we can do a joint estimation of the fair values using a multivariate Kalman filter. This way, information about the price of one instrument can be used to improve the estimation of the others. The pricing sources for these instruments can be any of those discussed previously.

There are some caveats though to take into account when using this source of pricing information:

The first one is that correlations are inferred parameters and therefore themselves subject to a certain amount of model risk. The Kalman filter model uses point estimations of the correlations for the updates, and therefore ignores the uncertainty associated with their estimation when updating the price. This issue can become quite relevant in certain situations, for example when estimating the price of illiquid instruments using more liquid ones. The liquid instrument will have a smaller estimation error than the illiquid one. If the point correlation between the instruments is high, the liquid instrument can have an over-weighted influence on the estimation of the price of the illiquid instrument, overriding the intrinsic price dynamics of the latter. A Bayesian treatment of correlation can overcome these issues, but then the Kalman filter estimation algorithm can no longer be used, requiring a numerical computation of posterior probabilities for the predict and update steps.

The second one arises when estimating the correlations to be used in the Kalman filter model. The typical estimation of correlations used in financial models uses synchronous pricing data, for instance end of day prices. However, rigorously speaking, the correlations used in the Kalman filter model for fair value estimation are between latent fair values, not price observations. A consistent estimation therefore requires us to compute them endogenously, for example using Maximum Likelihood Estimation or Expectation Maximization over the historical pricing data. The difficulty lies in defining estimators (e.g. MLE ones) for the covariance matrix of asynchronous data. The standard estimator is not valid and we must resort to less standard ones. This topic is discussed extensively in Guo et al., 2017, where they suggest using Fourier methods, among others. Again, as in the previous case, a Bayesian modelling approach for the covariance matrix provides a natural way to circumvent these issues, at the price of adding numerical complexity to the computation.
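The mechanism by which an observation of one instrument updates the fair value of a correlated one can be sketched with a two-instrument Kalman update written out by hand. This is a minimal example under stated assumptions: only instrument 0 is observed (observation matrix `H = [1, 0]`), the prior covariance is taken as given, and the function name and numbers are hypothetical.

```python
def update_two_instruments(means, cov, obs, obs_var):
    """Kalman update of two correlated fair values when only instrument 0 is observed.

    means:   [m0, m1] prior fair value estimates
    cov:     [[p00, p01], [p10, p11]] prior covariance of the estimates
    obs:     observed price of instrument 0
    obs_var: variance of the observation error
    """
    (p00, p01), (p10, p11) = cov
    innovation = obs - means[0]
    s = p00 + obs_var                    # innovation variance H P H^T + R
    k0, k1 = p00 / s, p10 / s            # Kalman gain K = P H^T / S
    new_means = [means[0] + k0 * innovation, means[1] + k1 * innovation]
    # Posterior covariance P - K H P, where H P is the first row of P
    new_cov = [[p00 - k0 * p00, p01 - k0 * p01],
               [p10 - k1 * p00, p11 - k1 * p01]]
    return new_means, new_cov


if __name__ == "__main__":
    prior_means = [100.0, 50.0]
    prior_cov = [[1.0, 0.8], [0.8, 1.0]]   # unit variances, correlation 0.8
    print(update_two_instruments(prior_means, prior_cov, obs=101.0, obs_var=1.0))
```

The gain applied to the unobserved instrument is proportional to the off-diagonal covariance term, which is why a high point correlation lets the liquid instrument dominate the estimate of the illiquid one.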

Fundamental models for fair value estimation¶

Fundamental models estimate the fair value of a financial instrument by analysing the value of its future cash-flows. Recall from our introductory chapter, Financial Markets, that financial instruments are essentially contracts that promise to pay back funds to the investor who purchases them, under conditions specified in the contract.

The present value theory of fair value¶

Even the simplest financial instrument, a promise to pay back a deterministic amount of money at a fixed future date, requires some theoretical hypothesis to estimate its fair value. The basic idea is that a unit of currency received today is worth more than the same unit received in the future, because it can be invested in the interim. Consider a risk-free deposit that pays a deterministic interest rate $r$ per period. An amount of one unit invested today grows to $(1+r)^n$ units after $n$ periods, as long as interest payments are reinvested at the same rate (compound interest). Conversely, receiving one unit in $n$ periods is economically equivalent to receiving $(1+r)^{-n}$ units today. This opportunity cost argument implies that any future cash flow must be discounted by the factor $(1+r)^{-n}$ to make it comparable with cash today. This economic consideration is referred to as the time value of money.

Formally, for an investment that delivers a future cash flow $C_T$ at time $T$, the present value $PV$ satisfies

$$PV = \frac{C_T}{(1+r)^T}$$

where we have assumed that an interest payment of $r$ is paid each unit of time. The factor $(1+r)^{-T}$ is called the discount factor, since it is used to discount future cash-flows. The present value becomes our fair value estimation within this framework.

A typical hypothesis that provides useful mathematical simplifications is that of continuously accrued interest rates. This is also a good approximation for real situations where interest is paid daily, for instance in money market funds. Consider an account that pays interest each time period of size $\Delta t$. The interest paid is $r \Delta t$ over a unitary notional. If we reinvest the interest, at time $T$ we have accumulated $(1 + r \Delta t)^{T / \Delta t}$. If we now take the limit $\Delta t \to 0$:

$$\lim_{\Delta t \to 0} (1 + r \Delta t)^{T / \Delta t} = e^{rT}$$

This gives us the expression of the discount factor for continuously paid interest, given by the inverse $e^{-rT}$. Under this approximation, the present value of our simple financial instrument becomes:

$$PV = e^{-rT} C_T$$

Exponential functions provide a lot of mathematical simplifications, hence the usefulness of this limit, particularly when applied to the computation of fair value for more complex financial instruments.

If we also include the price paid for the financial instrument, let us say in this case we pay initially $P_0$, we define net present value (NPV) as:

$$NPV = e^{-rT} C_T - P_0$$

Notice that, therefore, a rational investor will only be willing to invest in this financial instrument if $P_0 \leq e^{-rT} C_T$, otherwise its NPV would be negative.

We can now generalize this expression for a financial instrument that pays a stream of deterministic cash-flows $C_i$ at times $t_i$, $i = 1, \dots, N$. This is the case, for example, of standard government bonds issued by most countries. With $r$ the continuously compounded interest rate, the fair value given by the present value then becomes:

$$PV = \sum_{i=1}^{N} e^{-r t_i} C_i$$
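The present value of a deterministic stream of cash flows is straightforward to compute. The sketch below supports both per-period compounding and the continuous-compounding limit. The function names and the example bond (a three-year bond with annual coupons of 5 and a notional of 100) are hypothetical choices for illustration.

```python
import math


def present_value(cash_flows, rate, continuous=True):
    """Present value of deterministic cash flows given as (time, amount) pairs."""
    if continuous:
        return sum(amount * math.exp(-rate * t) for t, amount in cash_flows)
    return sum(amount / (1.0 + rate) ** t for t, amount in cash_flows)


def net_present_value(price_paid, cash_flows, rate, continuous=True):
    """Present value of the cash flows net of the price paid today."""
    return present_value(cash_flows, rate, continuous) - price_paid


if __name__ == "__main__":
    bond = [(1.0, 5.0), (2.0, 5.0), (3.0, 105.0)]   # hypothetical 3-year bond
    pv = present_value(bond, rate=0.03)
    print(pv, net_present_value(100.0, bond, rate=0.03))
```

An investor following the NPV criterion would buy this bond only if the price paid does not exceed the computed present value.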

What happens if these future cash-flows are contingent on information not known at the present day? For instance, we can have bonds that pay floating interest rates depending on reference rates that are not fixed until a future date. Shares pay dividends contingent on the financial results of the corporation that issues them. And derivatives are financial instruments whose value depends on the future price of an underlying instrument, hence the name “derivative”. A naive extension of the theory of present value would consider these future cash-flows as functions of random variables, replacing their future value by an expectation. Writing $C_i$ for the cash-flow paid at time $t_i$ and $r$ for the continuously compounded interest rate:

$$PV = \mathbb{E}\left[\sum_{i=1}^{N} e^{-r t_i} C_i \,\middle|\, \mathcal{F}_0\right]$$

where we have conditioned the expectation on the information available at the time of estimation, the filtration $\mathcal{F}_0$. If discount factors are not stochastic themselves (an argument we will revisit in the last section of this chapter), this becomes:

$$PV = \sum_{i=1}^{N} e^{-r t_i}\, \mathbb{E}\left[C_i \mid \mathcal{F}_0\right]$$

The issue with this approach is that, once future cash-flows become uncertain, their expected value is a point estimation that neglects the rest of the scenarios that can happen. Recall from our discussion in chapter Bayesian Modelling that choosing to represent a random variable by its expected value makes sense, in terms of decision theory (and fair value estimation is in the end linked to the decision to buy or sell a financial instrument), when the investor penalizes errors in the estimation using a square loss function. The behavior of real rational investors, though, shows a more asymmetric loss function, in which potential extra gains are generally valued less than the equivalent potential losses. This kind of behavior is better captured by using utility functions, as we will discuss in the next section.

The utility indifference theory of fair value estimation¶

To ground the discussion in another example, let us consider a specific case of a future uncertain cash-flow whose value depends on the price of another instrument, for example a stock, at the time of payment, $S_T$. The cash-flow is therefore $f(S_T)$. Notice that this is a specific case of a derivative contract. The function $f$ is called the pay-off of the derivative, the instrument whose price is $S_t$ is called the underlying of the derivative, and the time $T$ is the expiry date of the derivative. Apart from the value $S_T$, the pay-off can also depend on other parameters that are deterministic. For instance, for a forward contract we have $f(S_T) = S_T - K$, where $K$ is called the forward price; and for a European call option we have $f(S_T) = \max(S_T - K, 0)$, where $K$ is called the strike of the option. A European put option has a payoff $f(S_T) = \max(K - S_T, 0)$. There are also American options, which can be exercised before the expiry date, so that the exercise time itself becomes a random variable.

This derivative pays in the future a quantity that is contingent on the future value of the underlying, whose value is known today but uncertain in the future. To get an estimation, we need to use probability theory to put some bounds on our uncertainty, so we characterize the future price by a probability distribution. As we saw in chapter Stochastic Calculus, a popular model that allows us to compute such a future distribution is a random walk model or a geometric random walk, the latter being a natural choice for prices that cannot be negative, e.g. in the case of stocks. In those cases the future distribution can be computed, being a normal distribution in the first case and a log-normal distribution in the second, although for short expiries the random walk can also be a good model for stocks. These models sometimes allow us to obtain closed-form solutions, but more realistic models that better capture empirical distributions of prices can be used.

As mentioned in the previous section, we cannot just value this cash-flow using the expected value of the pay-off, since it would ignore the risk-profile of the investor. As discussed in more detail in chapter Stochastic optimal control, utility functions provide a mathematical formalism that allows us to capture realistic risk behaviors. Utility provides a description of the value that the cash-flows derived from the financial instrument have for the investor. Typical utility functions show the notion of marginally decreasing utility for increasingly larger cash-flows. In situations where cash-flows are random variables, such behavior models investors that are risk averse, meaning that they need to be compensated increasingly more to take on extra risk.

To apply the utility function framework to the problem of fair pricing, we need to compute expected utilities to characterize the value that the investor places on the contract. Using an exponential utility function for simplicity, this means:

where is the risk aversion coefficient of the investor. We have discounted the payoff at by the discount factor in order to consider the time value of money, as discussed in the previous section, although now we consider for generality an initial time .

The fair value in this formalism is the so-called premium of the derivative, denoted , that the investor is willing to pay (or be paid, depending on the pay-off function) to enter into the derivative contract today. This changes the utility calculation, since it needs to take into account the premium:

When modelling rational risk-averse agents with utility functions, we model their decisions as those that maximize the expected utility. However, in this case this cannot be used to compute the premium, since naturally the premium that maximizes utility is !. The problem is, of course, that it does not take into account the utility maximization of the dealer selling the derivative, who would not enter into the contract at this premium. The same framework could be used to model the dealer’s payoff, which is the reverse from the investor, albeit with a different dealer’s risk aversion, :

but even introducing the dealer’s utility function, how could we compute the value of the premium?

For the answer, we first need to frame the problem in other terms: what is the maximum premium that the investor would be willing to pay to enter into the contract? Since the alternative of not entering into the contract implies a zero payoff with total certainty, whose expected utility in this framework is , we can argue that the investor would be willing to buy the derivative as long as the premium makes him/her better off, i.e. . For a value of the premium such that , the investor is indifferent between buying and not buying. The premium calculated this way is called the certain equivalence price of the investor, since it is the guaranteed payoff that the investor would accept now rather than taking the chance of a higher, but uncertain, payoff. Of course the same computation could be done for the dealer, obtaining a different certain equivalence price. An agreement will only happen if the maximum premium that the investor is willing to pay is above the minimum premium that the dealer is willing to receive.

Let us first see the problem from the dealer’s point of view. In real situations, it is typically the investor who comes to the dealer and requests a price for the derivative. A natural reference for derivatives pricing is therefore the minimum premium that the dealer would be willing to accept to provide the contract, i.e. the certain equivalence price of the dealer, which is the one that solves:

We can now obtain a general expression for the premium:

For those dealers that have zero risk aversion, i.e. they are risk neutral, by taking the limit we get:

And for small, but positive risk aversion:

We can derive the same expression for an investor; we get:

If the investor has a small but positive risk aversion:

We see immediately that , so there is only agreement if both investor and dealer are risk neutral, or at least one is sufficiently risk prone. Dealers are expected to be naturally risk averse, and some clients (speculative ones) will be risk prone, but not all. This would imply that derivatives transactions happen less often than what we empirically observe. What’s going on? There are two caveats to this discussion:

Some clients might use derivatives to hedge their risks, i.e. they have an existing exposure into a risky instrument, and are willing to pay a premium to reduce the risk. This exposure needs to be introduced into the pricing framework to derive the correct certain equivalence price.

Dealers don’t simply passively take the opposite risk from the derivative contract. They actively hedge their exposure, changing as well the final payoff and therefore the price they are willing to accept.

Let us see a few examples to illustrate this.

Example: pricing of a simple contingent claim¶

A contingent claim is a contract that pays off only under the realization of an uncertain event. Many derivatives contracts like options are contingent claims. The most simple contingent claim pays 1$ under the realization of a specific uncertain event, and zero in all other cases. These contingent claims are called Arrow-Debreu securities, and have a theoretical interest since we could in principle decompose any contingent claim as a linear combination of these securities. Therefore, if we know the prices (premiums) of Arrow-Debreu securities, we could price any contingent claim. We say that in this case we have a complete market, where we can trade instruments linked to any future state of the market.

For our purposes, though, we just want to discuss a simple example of reservation prices. Let us consider a contingent claim in which the dealer pays the investor 1$ if we get heads when tossing a fair coin in the present. In our framework, the underlying is now the side of the coin, heads or tails, each with probability 1/2. There is also no time discounting, since we toss the coin in the present. The value of the reservation price for the investor then reads:

For a risk-neutral investor, by taking the limit of zero risk aversion, we simply get a premium of 0.5$, which makes sense: the investor is willing to pay 0.5$ to make the game fair. Or, in other terms, to make the expected value of the game zero. A fully risk-averse investor, in the limit of infinite risk aversion, has a zero premium, i.e. is only willing to buy the contract when there is a guarantee of no losses under any scenario. In between, the premium lies between those two values: the investor will be willing to pay more than 0$ to trade, as long as the payoff is skewed in his or her favor.
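The coin-toss example can be checked numerically. The sketch below assumes an exponential utility normalized as $U(x) = -e^{-\gamma x}$ with risk aversion $\gamma$ and no discounting. The exact normalization used in the text may differ, but indifference prices do not depend on it. Under this assumption the buyer's indifference premium is $-\frac{1}{\gamma}\ln \mathbb{E}[e^{-\gamma X}]$ and the seller's is $\frac{1}{\gamma}\ln \mathbb{E}[e^{\gamma X}]$, where $X$ is the payoff. Function names are hypothetical.

```python
import math


def buyer_indifference_price(outcomes, risk_aversion):
    """Maximum premium a buyer with exponential utility would pay.

    outcomes: list of (probability, payoff) pairs for a payoff paid today.
    Assumes U(x) = -exp(-risk_aversion * x); zero risk aversion is the
    risk-neutral limit, i.e. the expected payoff.
    """
    if risk_aversion == 0:
        return sum(p * x for p, x in outcomes)
    moment = sum(p * math.exp(-risk_aversion * x) for p, x in outcomes)
    return -math.log(moment) / risk_aversion


def seller_indifference_price(outcomes, risk_aversion):
    """Minimum premium a seller with exponential utility would accept."""
    if risk_aversion == 0:
        return sum(p * x for p, x in outcomes)
    moment = sum(p * math.exp(risk_aversion * x) for p, x in outcomes)
    return math.log(moment) / risk_aversion


if __name__ == "__main__":
    coin = [(0.5, 1.0), (0.5, 0.0)]   # pays 1 on heads, 0 on tails
    for gamma in (0.0, 1.0, 10.0):
        print(gamma,
              buyer_indifference_price(coin, gamma),
              seller_indifference_price(coin, gamma))
```

For any positive risk aversion the buyer's price falls below 0.5 while the seller's rises above it, which illustrates why two risk-averse counterparties taking passive, unhedged positions would not agree on a premium.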

Example: Forward on a non-dividend paying stock¶

Let us now focus on a more realistic case and find the maximum premium that a risk averse investor would be willing to pay for a forward contract on a non-dividend paying stock [1]. The buyer of a forward has the obligation to buy a stock at the expiry $T$ at a pre-agreed price $K$. Therefore, with $S_T$ the stock price at expiry, the payoff function reads:

$$f(S_T) = S_T - K$$

The maximum premium that the investor is willing to pay reads then:

Let us assume a Brownian motion model for the (non-dividend paying) stock:

where is the drift (expected revaluation), is the volatility and a Wiener process. Integrating this SDE we have . Notice that it is only a realistic model as long as , since otherwise the stock price could become negative in a relevant proportion of scenarios, which is not financially possible. The advantage of this model is that it allows us to compute a closed form for the premium, since the integral becomes the expected value of a log-normal random variable , which is known[^2]:

Forward contracts are usually priced by choosing the forward price that makes the premium zero, so that no cash is exchanged at the inception of the contract. In this case:

Unless the client has strong expectations of future returns of the stock (characterized by its drift ), a risk averse client will only accept a forward price smaller than the current spot price, given the risk penalty on the right-hand side of the equation, which is negative.

As mentioned before, though, many clients buy forwards as a way to hedge their risk with respect to the underlying. For example, let us assume the client knows today that he will need to buy the stock at a certain time , and not before. This means the client is exposed to market risk, which he could neutralize by entering into a forward contract. In this case, the client has to compare the utility of two different scenarios: in the first one, the client buys the stock at time at the market price available, . In the second one, he enters into a forward contract at time that expires at time . The forward price is agreed so that the client does not need to pay a premium at time . The expected utility of the first scenario is:

The expected utility of the second scenario is now:

The certain equivalence forward price for the client can be obtained by equating both expressions:

As we would expect, now the risk-averse client is willing to pay a higher forward price to remove the exposure to market risk.

Example: European Call Options¶

The calculation for a forward was relatively tractable since the payoff of the derivative was linear in the stock price. What about non-linear payoffs? This is the case, for instance, of a European call option on a stock, which has the payoff:

$$f(S_T) = \max(S_T - K, 0)$$

where $S_T$ is the stock price at expiry and $K$ is the strike of the option. The risk-averse investor will be willing to pay the dealer a maximum premium of:

In the limit of a risk-neutral investor, the premium is:

Let us consider again the case of a non-dividend paying stock, but in this case we will model it as a geometric Brownian motion to ensure negative prices are not allowed:

Integrating this SDE up to T:

where . We can further decompose the expression of the premium as:

Let us now change variables from to in the integration. The first integral becomes:

where we have defined the functions:

and is the cumulative distribution function of the standard normal distribution. The second integral is then:

Wrapping up all we get:

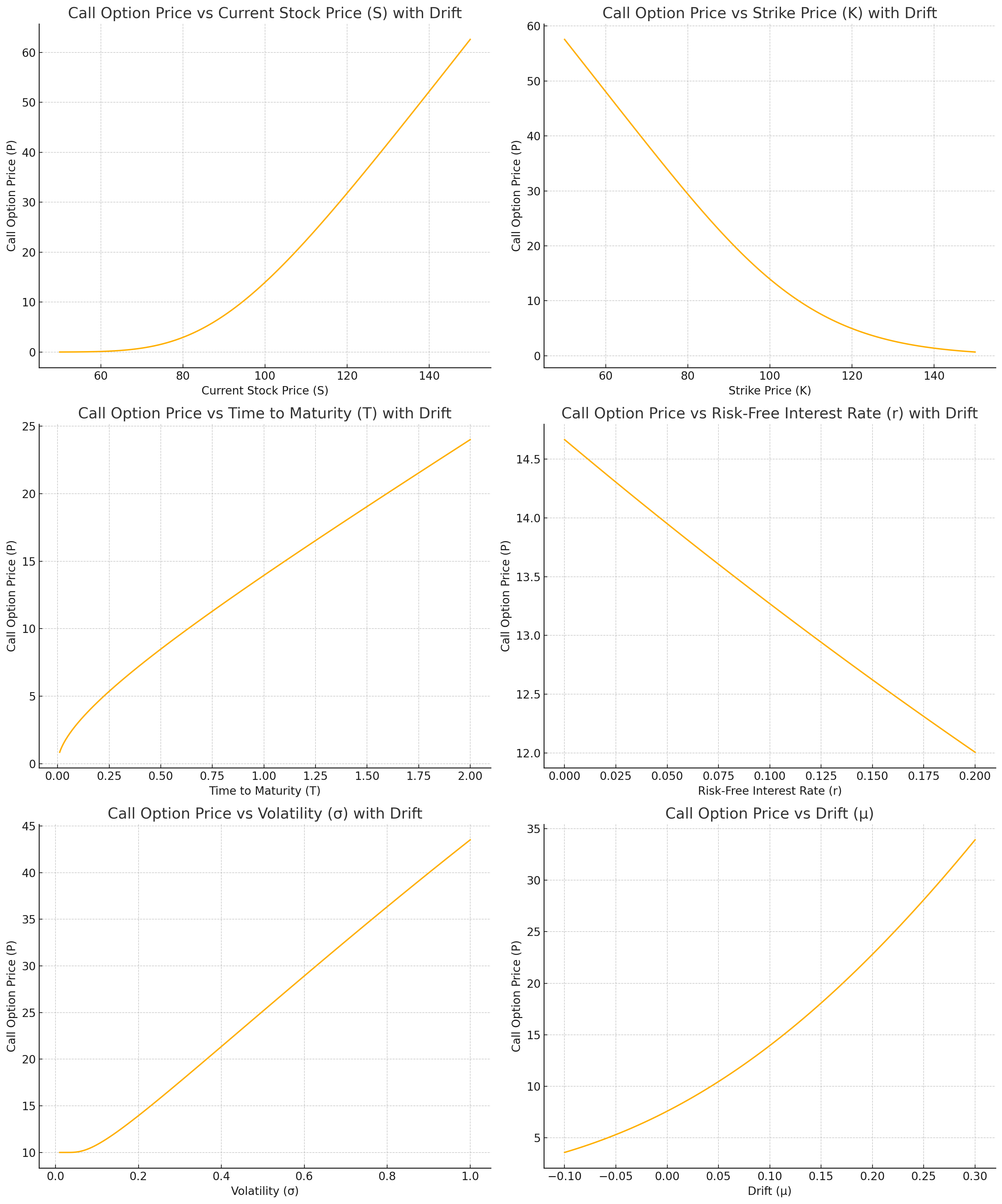

where we have used the property . Let us analyze this formula, which, recall, is the maximum premium that an investor is willing to pay for the call option. It depends on the following parameters:

Current stock price: as the current stock price increases, the premium increases. This is because the call option gives the right to buy the stock at the strike price, so a higher current stock price makes this option more valuable.

Strike price: as the strike price increases, the premium decreases. A higher strike price makes the option less valuable since it would cost more to exercise the option.

Time to maturity: as the time to maturity increases, the premium increases. More time to maturity means more opportunity for the stock price to move favorably.

Risk-free interest rate: as the risk-free interest rate increases, the premium decreases. A higher risk-free rate reduces the present value of the option payoff, making the option less attractive.

Expected stock drift: as the stock drift increases, the premium increases, since it is more likely that the option will be exercised.

Expected volatility: as volatility increases, the premium increases. Higher volatility increases the probability of the stock price moving significantly, which benefits the option holder.

We plot those dependencies in the following picture:

Figure 2:Dependencies of a call option premium for a risk neutral investor, derived as the maximum premium the investor is willing to pay (reservation or indifference price). We use the parameters , , in years, , , .
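The dependencies listed above can also be checked numerically. The following is a minimal sketch, assuming the risk-neutral investor's premium takes the standard lognormal closed form $e^{-rT}\left[S_0 e^{\mu T}\Phi(d_1) - K\Phi(d_2)\right]$ with $d_1$ computed from the real-world drift $\mu$. The function names and all parameter values are hypothetical choices for illustration and are not the ones used in the figure.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF written in terms of the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def indifference_call_premium(s0, k, t, r, mu, sigma):
    """exp(-r t) * E[(S_T - K)^+] for a GBM with real-world drift mu.

    This is the reservation price of a risk-neutral investor (assumed form).
    """
    d1 = (math.log(s0 / k) + (mu + 0.5 * sigma**2) * t) / (sigma * math.sqrt(t))
    d2 = d1 - sigma * math.sqrt(t)
    return math.exp(-r * t) * (s0 * math.exp(mu * t) * norm_cdf(d1) - k * norm_cdf(d2))


# Hypothetical base scenario (not the figure's parameters).
base = dict(s0=100.0, k=100.0, t=1.0, r=0.03, mu=0.08, sigma=0.2)
base_premium = indifference_call_premium(**base)

# Bump one parameter at a time and record the change in the premium.
bumps = {"s0": 5.0, "k": 5.0, "t": 0.25, "r": 0.01, "mu": 0.01, "sigma": 0.05}
sensitivities = {}
for name, bump in bumps.items():
    bumped = dict(base, **{name: base[name] + bump})
    sensitivities[name] = indifference_call_premium(**bumped) - base_premium
    print(f"{name:>5} bumped by {bump}: premium change {sensitivities[name]:+.4f}")
```

In this scenario the sign of each change should match the qualitative discussion: positive for the stock price, maturity, drift and volatility, negative for the strike and the risk-free rate. Setting `mu` equal to `r` makes the expression coincide with the familiar Black-Scholes-Merton call price.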

The arbitrage-free theory of derivatives pricing¶

So far we have looked closely at the pricing behaviour of clients who transact derivatives either because they want to bet on the market (risk prone) or because they want to hedge their exposure (risk averse). The dealers on the other side of the transaction are typically risk averse (as is expected of a banking business). However, if they were to passively take the opposite side of the bet, they would transact only with those clients who are risk prone or risk averse enough to accept the premiums the dealers would demand.

In reality, as we anticipated above, dealers take on a more active role in order to handle their exposure to the risks of the derivatives they are transacting with the clients. There are mainly two ways a dealer will conduct this business:

If the market for the derivative is relatively liquid on both sides, with plenty of investors willing to take the long or short position in the derivative over periods much shorter than the expiry, the dealer will act as a market-maker of the derivative. In this case, the dealer will quote bid and ask prices that compensate it for providing liquidity with its own capital, which exposes it to inventory risk and information asymmetry risk.

If the market is one-sided and / or illiquid, in the sense of having a small number of potential transactions before the expiry of the contract, the dealer will try to hedge the risk using other financial instruments available.

In practice, a combination of both situations is also common.

As we discussed in the first part of this chapter, in the first situation we might not need a derivatives pricing model, since we can extract the prices of derivatives directly from observations of trades or from requests for quotes that are not closed. It is in the second case that we need a theory of derivatives pricing that takes into account potential hedging strategies that mitigate the risk of the dealer. We anticipate that, in this framework, the minimum price or premium that the dealer will accept to sell the derivative is the one that compensates it for the costs of hedging plus the residual risk.

More interestingly, we will see that in some cases, under certain theoretical conditions, a perfect hedging strategy might exist, so the minimum price will be exactly the cost of the hedging strategy. In this setup it is also called the perfect replication strategy, since a perfect hedge implies no risk, and therefore a replication of the payoff using other financial instruments. A consequence of the existence of such a replication strategy is that dealers are forced to price derivatives consistently. Otherwise they would generate risk-free arbitrage opportunities: other dealers who price the derivative correctly would trade with the one mispricing it and pocket the difference without risk. Hence, this theory of derivatives pricing is also called the arbitrage-free theory of derivatives pricing.

Let us revisit the case of forwards and options from this perspective.

Example: Forward on a non-dividend paying stock¶

Under the modelling hypotheses used in the previous sections to value the premium of a forward, namely that 1) the interest rate is locked during the period of the forward and 2) there is no counterparty risk, i.e. no risk that the investor will fail to satisfy its obligations, there is actually a simple replication strategy that hedges all the risk of the contract. If the dealer is selling the forward to the investor, therefore guaranteeing a price $K$ to buy a share at time $T$, then the dealer:

Borrows $S_0$ dollars in the repo market at interest rate $r$ during the period of the forward. We assume that interest accrues daily but is settled at the expiry. The stock bought is used as collateral in the repo.

Buys the underlying stock with this money at inception, paying $S_0$.

At the expiry of the forward, delivers the stock to the investor, receives $K$ and repays the loan plus accrued interest.

If we consider that the daily accrual of interest can be well approximated by continuous accrual, then the dealer needs to repay at $T$ an amount $S_0 e^{rT}$. The payoff for the dealer at the expiry is therefore:

$$
K - S_0 e^{rT}
$$

which is deterministic under the hypotheses of the model. A rational dealer will of course not accept a deterministic loss, so the minimum premium that it will command for this contract is the one that offsets the discounted value of this payoff:

$$
F_0 = -e^{-rT}\left(K - S_0 e^{rT}\right) = S_0 - K e^{-rT}
$$

In practice, forward markets work by quoting the strike such that the premium is zero, hence:

$$
K = S_0 e^{rT}
$$

This is the arbitrage-free price of a forward contract. As mentioned above, it is called arbitrage-free since any other price would represent a risk-free arbitrage opportunity for other dealers. For example, let's assume this dealer quotes a strike $K < S_0 e^{rT}$. Another dealer could buy the forward from the dealer, and use the opposite replication strategy:

Borrow the stock in repo and sell it in the market at price $S_0$ to fund the repo.

At time $T$ close the repo with the stock delivered by the dealer selling the forward, receive $S_0 e^{rT}$ in cash and pay $K$.

The payoff for this dealer is then:

$$
S_0 e^{rT} - K > 0
$$

i.e. a risk-free profit!
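The cash-and-carry argument above is easy to reproduce numerically. The sketch below assumes continuous accrual at a flat repo rate and zero premium on the quoted forward. The function names and the numbers (spot 100, rate 5%, one year, a quoted strike of 103) are hypothetical.

```python
import math


def fair_forward_strike(s0: float, r: float, t: float) -> float:
    """Strike that makes the forward premium zero: S_0 * exp(r t)."""
    return s0 * math.exp(r * t)


def forward_premium(s0: float, k: float, r: float, t: float) -> float:
    """Minimum premium for selling a forward with strike k: S_0 - k * exp(-r t)."""
    return s0 - k * math.exp(-r * t)


def reverse_carry_profit(s0: float, k_quoted: float, r: float, t: float) -> float:
    """Profit at expiry from buying a zero-premium forward at k_quoted,
    selling the borrowed stock at s0 and lending the proceeds at rate r."""
    cash_at_expiry = s0 * math.exp(r * t)  # cash returned when the repo is closed
    return cash_at_expiry - k_quoted       # pay k_quoted for the delivered stock


s0, r, t = 100.0, 0.05, 1.0                # hypothetical market data
k_fair = fair_forward_strike(s0, r, t)
print(f"arbitrage-free strike: {k_fair:.4f}")
print(f"premium at that strike: {forward_premium(s0, k_fair, r, t):.2e}")
print(f"profit if a dealer quotes 103: {reverse_carry_profit(s0, 103.0, r, t):.4f}")
```

Any quoted strike below the arbitrage-free one yields a positive, price-independent profit for the second dealer; a quote above it can be exploited with the original cash-and-carry strategy instead.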

Example: European Call Options¶

In the case of options there is no such obvious static replication strategy if we are only allowed to use the underlying stock and repo contracts. By static replication strategy we mean that we don't need to modify the positions of the replication portfolio (the stock and the repo) during the life of the contract. If we are able to trade other derivatives, however, there is actually a static replication strategy. If the dealer sells the call option to an investor, then it immediately:

Buys from another dealer a put option with the same strike and expiry

Buys from another dealer a forward with the same strike and expiry

The payoff at the expiry is:

$$
-(S_T - K)^+ + (K - S_T)^+ + (S_T - K) = 0
$$

meaning that the portfolio of a put and a forward replicates the call option. At inception, the dealer is paid a premium $C_0$ for the call and pays $P_0$ for the put and $F_0$ for the forward, hence the payoff at inception is:

$$
C_0 - P_0 - F_0
$$

In order to avoid losses, the minimum premium that the dealer must command is therefore:

$$
C_0 = P_0 + F_0 = P_0 + S_0 - K e^{-rT}
$$

which is called the put-call parity relationship. The premium for the forward can be derived as discussed in the previous section. However, we are left with a sort of chicken-and-egg problem with regard to the call and put premiums: given one, we can determine the other, but we don't yet have a replication strategy for the put to derive the call premium, and vice versa.
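The static replication behind put-call parity can be verified scenario by scenario. This is a small sketch under the same assumptions as the text (same strike and expiry for all three contracts, forward premium $S_0 - K e^{-rT}$). The helper names, the strike of 100 and the grid of terminal prices are hypothetical.

```python
import math


def call_payoff(s_t: float, k: float) -> float:
    return max(s_t - k, 0.0)


def put_payoff(s_t: float, k: float) -> float:
    return max(k - s_t, 0.0)


def forward_payoff(s_t: float, k: float) -> float:
    return s_t - k


def call_premium_from_put(put_premium: float, s0: float, k: float, r: float, t: float) -> float:
    """Put-call parity: C_0 = P_0 + S_0 - K * exp(-r t)."""
    return put_premium + s0 - k * math.exp(-r * t)


strike = 100.0
terminal_prices = [50.0 + 5.0 * i for i in range(21)]  # 50, 55, ..., 150

# Dealer's position at expiry: short call, long put, long forward.
residuals = [
    -call_payoff(s, strike) + put_payoff(s, strike) + forward_payoff(s, strike)
    for s in terminal_prices
]
max_residual = max(abs(x) for x in residuals)
print(f"largest hedged payoff across scenarios: {max_residual}")
```

The residual payoff vanishes in every scenario, which is why only the premiums at inception matter. Note that `call_premium_from_put` still needs a put premium as input, which is exactly the circularity discussed above.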

If no static replication is available, is it possible to find a dynamic one that reproduces the payoff without uncertainty? Or are we left with strategies that might minimize uncertainty but do not remove it, so that we need to go back to our utility indifference theory?

The Black - Scholes - Merton Model¶

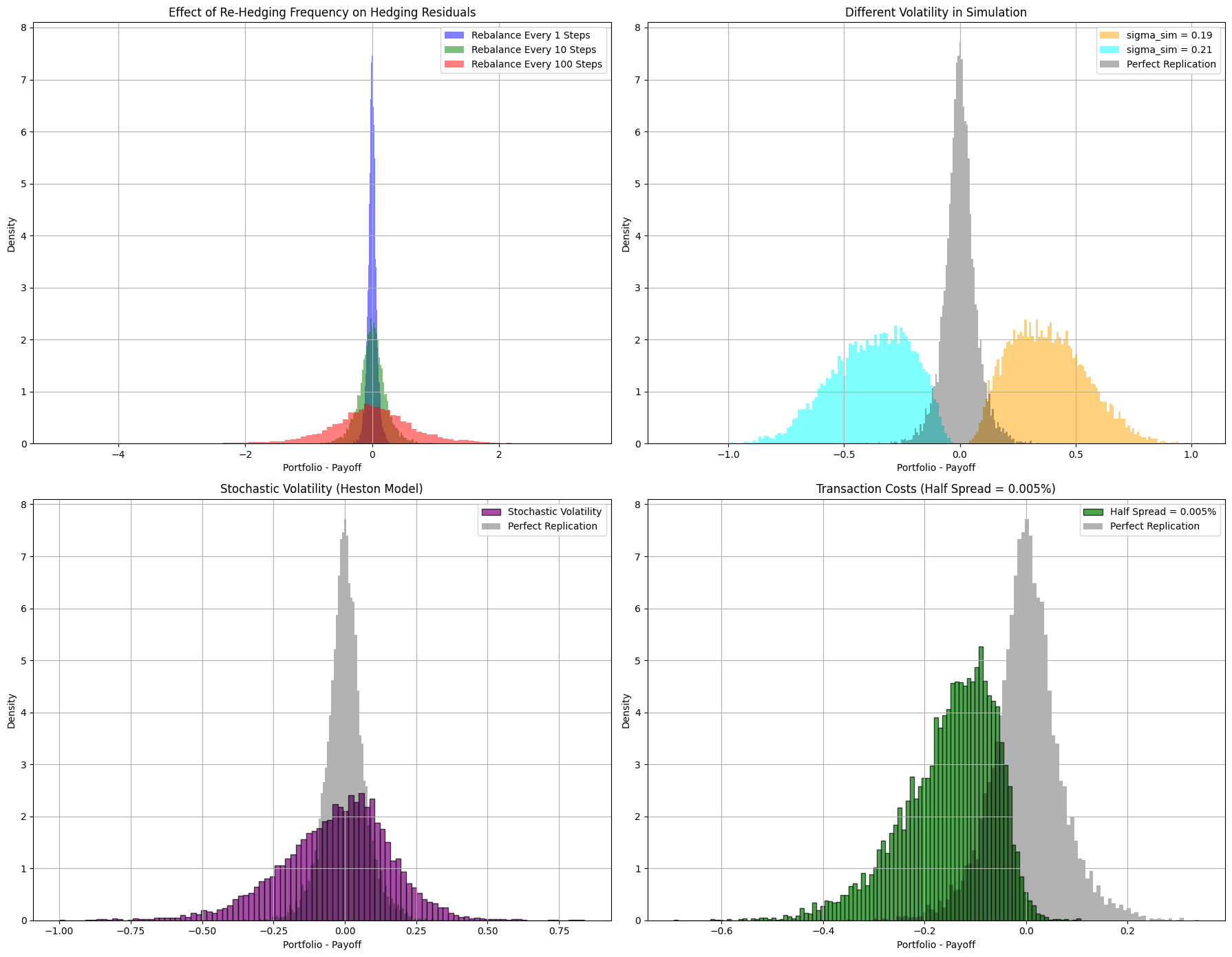

Fischer Black and Myron Scholes (Black & Scholes, 1973), and separately Robert Merton (Merton, 1973), provided an answer to this question: under certain theoretical conditions, we can indeed find a dynamic replication strategy, based on the underlying stock and a risk-free account, that reproduces the payoff with no uncertainty. The main conditions are the following:

The stock price follows Geometric Brownian Motion dynamics:

$$
dS_t = \mu S_t\,dt + \sigma S_t\,dW_t
$$

The replicating portfolio is composed of a position $\Delta_t$ in the underlying stock and a cash account $B_t$ from which we can borrow or lend money freely at a deterministic risk-free rate $r$:

$$
\Pi_t = \Delta_t S_t + B_t
$$

The position in the stock can be adjusted continuously over the life of the option, without restrictions such as market trading hours. There are also no transaction costs when buying or selling the stock, and no restrictions on shorting the stock.

The replication portfolio is self-financing, meaning that no cash is added or withdrawn from the portfolio after the initial investment. Any proceeds from selling are immediately reinvested in the portfolio. Mathematically, it means that:

$$
d\Pi_t = \Delta_t\,dS_t + r B_t\,dt = \Delta_t\,dS_t + r\left(\Pi_t - \Delta_t S_t\right)dt
$$

There is no counterparty default risk, i.e. the investor and the dealer will pay whatever obligations they have at the end of the option.

If the portfolio indeed replicates the option payoff at the maturity in any scenario, we must have $\Pi_T = (S_T - K)^+$, where we have considered a call option but the argument applies to any other payoff function. But since by construction the portfolio is self-financing, if such a dynamic strategy exists we must have $\Pi_t = C_t$ for any time $t \le T$, where $C_t$ is the premium of the option at time $t$. Otherwise, there would be a risk-free arbitrage opportunity, e.g. in the case $C_t > \Pi_t$, selling the option at price $C_t$, buying the replicating portfolio with the cash from the premium and making an instantaneous gain of the difference $C_t - \Pi_t$. The same reasoning applies when $C_t < \Pi_t$, only in this case we buy the option paying a discounted premium. Therefore, if we can find such a strategy we immediately solve the problem of option pricing, since by the no-arbitrage argument the premium is equal to the cost of replication.

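The condition $\Pi_t = C_t$ is equivalent to $\Pi_0 = C_0$ and $d\Pi_t = dC_t$. Additionally, this equality implies that $C_t = C(t, S_t)$, i.e. the premium of the option has to be a function of time and the contemporary value of the stock. We can then use Ito's lemma to further understand the requirements for such a strategy to exist:

$$
\Delta_t\,dS_t + r\left(\Pi_t - \Delta_t S_t\right)dt = \left(\frac{\partial C}{\partial t} + \mu S_t \frac{\partial C}{\partial S} + \frac{1}{2}\sigma^2 S_t^2 \frac{\partial^2 C}{\partial S^2}\right)dt + \sigma S_t \frac{\partial C}{\partial S}\,dW_t
$$

Grouping the terms dependent on $dt$ and $dW_t$ separately we have:

$$
\left(\frac{\partial C}{\partial t} + \frac{1}{2}\sigma^2 S_t^2 \frac{\partial^2 C}{\partial S^2} + \mu S_t\left(\frac{\partial C}{\partial S} - \Delta_t\right) - r\left(\Pi_t - \Delta_t S_t\right)\right)dt + \sigma S_t\left(\frac{\partial C}{\partial S} - \Delta_t\right)dW_t = 0
$$

Since the equality must apply for any arbitrary $dt$ and $dW_t$, each term in parentheses must cancel separately. Starting with the right-hand term we have:

$$
\Delta_t = \frac{\partial C}{\partial S}
$$

which is called the delta-hedging condition, given its obvious connection with linear hedging strategies where we try to neutralize the exposure of a financial portfolio to a risk factor, the stock price in this case. By delta hedging the portfolio we ensure that its value is independent of the random dynamics of the stock, at least during an infinitesimal time. However, a strategy that delta-hedges the portfolio at every time is not necessarily self-financing, i.e. we might need to add extra cash during the life of the portfolio. The problem is that, a priori, the cash required for delta hedging would depend on the path of the stock, making it a random variable. In that case the premium would also be a random quantity with a certain distribution.

In order to have a deterministic premium the portfolio has to be self-financing, which requires that the first term of the equality above is also zero, namely:

$$
\frac{\partial C}{\partial t} + r S_t \frac{\partial C}{\partial S} + \frac{1}{2}\sigma^2 S_t^2 \frac{\partial^2 C}{\partial S^2} - r C = 0
$$

where we have used the self-financing condition $d\Pi_t = \Delta_t\,dS_t + r\left(\Pi_t - \Delta_t S_t\right)dt$ together with $\Delta_t = \partial C / \partial S$ and $\Pi_t = C_t$. The resulting equation is the celebrated Black-Scholes-Merton (BSM) partial differential equation. Solving this equation with the terminal condition $C(T, S_T) = (S_T - K)^+$ allows us to compute the value of the premium for any time $t$ deterministically. Therefore, by virtue of the replicating portfolio and no-arbitrage arguments, if the Black-Scholes-Merton conditions are satisfied the price of an option is no longer a random quantity as in the utility indifference framework.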

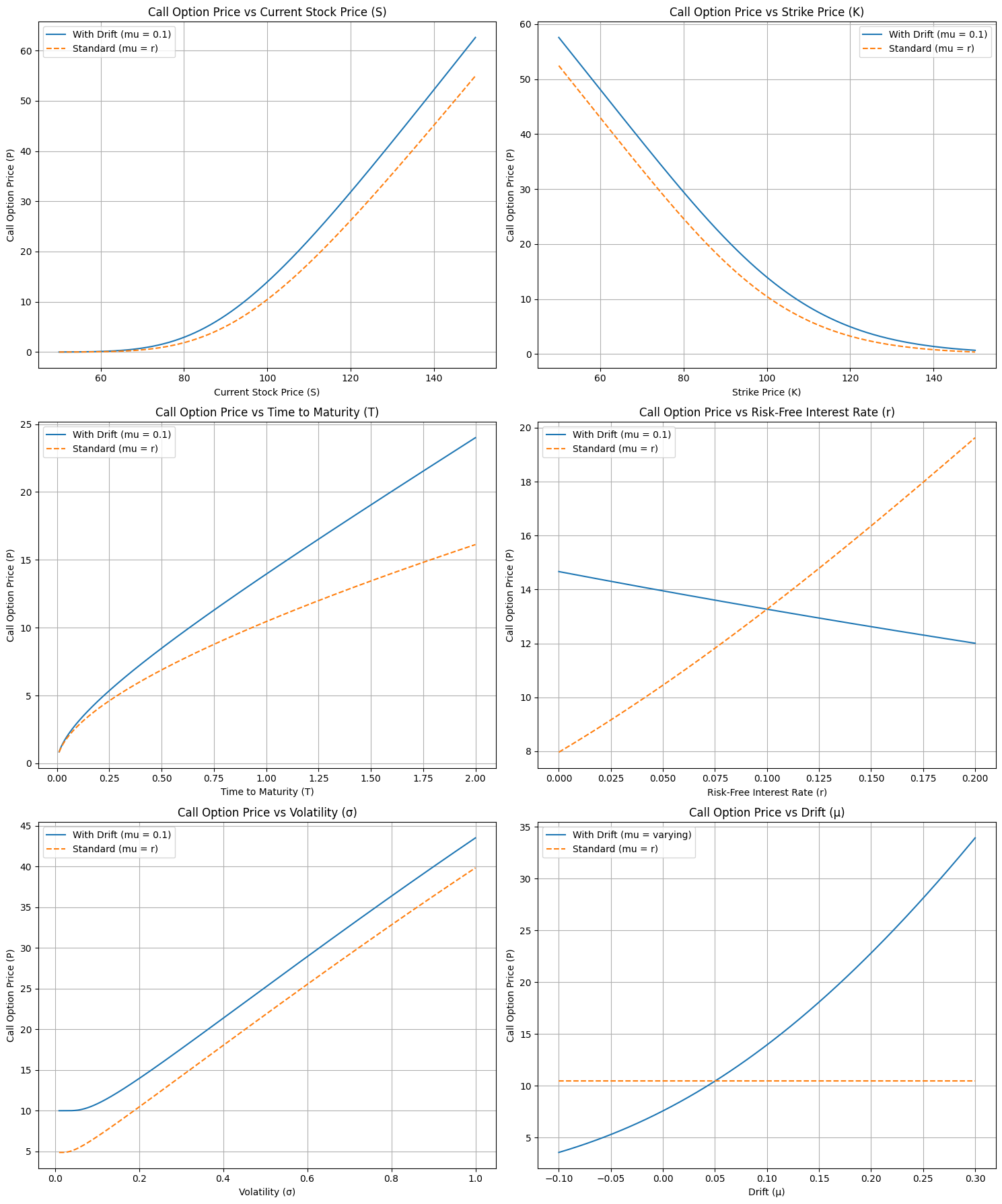

Before discussing the solution to this equation, we can get another insight simply by inspecting it: the option premium does not depend on the drift of the stock, only on its volatility. In the utility indifference framework, the estimation of the drift plays a big role in the price that the investor is willing to pay for the option, since it affects the probability that the option is exercised. However, for a dealer pricing the option, as long as the BSM framework holds, the directionality of the market is irrelevant: investing the premium into the BSM portfolio and implementing the dynamic strategy guarantees a replication of the payoff in any scenario.
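The drift-independence of the replication can be illustrated with a discrete-time simulation. The sketch below is only an approximation of the continuous strategy: it rebalances a finite number of times, so a small residual error remains. It also relies on the well-known closed-form BSM call price and delta, which have not been derived at this point of the text, and all function names and parameter values are hypothetical.

```python
import math
import random


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_call(s, k, tau, r, sigma):
    """Closed-form BSM call price and delta for time to expiry tau > 0."""
    d1 = (math.log(s / k) + (r + 0.5 * sigma**2) * tau) / (sigma * math.sqrt(tau))
    d2 = d1 - sigma * math.sqrt(tau)
    price = s * norm_cdf(d1) - k * math.exp(-r * tau) * norm_cdf(d2)
    return price, norm_cdf(d1)


def hedging_error(mu, rng, s0=100.0, k=100.0, t=1.0, r=0.03, sigma=0.2, n_steps=250):
    """Terminal value of the delta-hedged portfolio minus the call payoff."""
    dt = t / n_steps
    s = s0
    premium, delta = bsm_call(s, k, t, r, sigma)
    cash = premium - delta * s                 # premium received funds the stock purchase
    for i in range(1, n_steps + 1):
        z = rng.gauss(0.0, 1.0)
        s *= math.exp((mu - 0.5 * sigma**2) * dt + sigma * math.sqrt(dt) * z)
        cash *= math.exp(r * dt)               # cash account accrues at the risk-free rate
        if i < n_steps:
            _, new_delta = bsm_call(s, k, t - i * dt, r, sigma)
            cash -= (new_delta - delta) * s    # rebalance without injecting cash
            delta = new_delta
    return delta * s + cash - max(s - k, 0.0)


rng = random.Random(0)
mean_abs_errors = {}
for mu in (-0.10, 0.03, 0.20):                 # very different real-world drifts
    errors = [hedging_error(mu, rng) for _ in range(100)]
    mean_abs_errors[mu] = sum(abs(e) for e in errors) / len(errors)
    print(f"drift {mu:+.2f}: mean absolute replication error {mean_abs_errors[mu]:.3f}")
```

The replication error should stay small compared with the premium for every drift, and it is expected to shrink further as `n_steps` grows, which is the discrete counterpart of the continuous rebalancing assumption.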

Solving the Black-Scholes-Merton equation¶

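There are different ways to solve the BSM equation. Most introductory textbooks on the topic (see for example Joshi, 2003, Wilmott, 2007) follow the derivation used in the seminal paper, which uses an ansatz for the solution that transforms the equation into the heat equation, whose analytical solution is well known (Evans, 2010). Here, we will take a different approach and use the Feynman-Kac theorem introduced in Chapter (#stochastic_calculus), section (#feynman_kac). Recall that the Feynman-Kac theorem provides a general solution to a family of partial differential equations in terms of an expected value. For the reader's convenience we review it again here: the solution to the PDE

$$
\frac{\partial u}{\partial t} + \mu(x, t)\frac{\partial u}{\partial x} + \frac{1}{2}\sigma^2(x, t)\frac{\partial^2 u}{\partial x^2} - V(x, t)\,u + f(x, t) = 0
$$

with the boundary condition $u(x, T) = \psi(x)$, is the following expected value

$$
u(x, t) = \mathbb{E}\left[\int_t^T e^{-\int_t^s V(X_\tau, \tau)\,d\tau} f(X_s, s)\,ds + e^{-\int_t^T V(X_\tau, \tau)\,d\tau}\,\psi(X_T) \,\middle|\, X_t = x\right]
$$

where $X_t$ satisfies the general stochastic differential equation:

$$
dX_t = \mu(X_t, t)\,dt + \sigma(X_t, t)\,dW_t
$$

The BSM equation is a specific case of this general PDE where $\mu(x, t) = r x$, $\sigma(x, t) = \sigma x$, $V(x, t) = r$, $f(x, t) = 0$, $\psi(x) = (x - K)^+$. The solution of the BSM equation can be expressed as:

$$
C(t, S_t) = e^{-r(T - t)}\,\mathbb{E}\left[(S_T - K)^+ \,\middle|\, S_t\right]
$$

where $S_t$ satisfies the SDE: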